How to Create Video Ads with AI in 2026

I tested 11 different setups for AI video ad production over the past four months. Most looked great in demos, but only three actually survived real client work.

Hey guys, I'm Dora, and here's what nobody tells you upfront: the biggest time sink isn't generating the video.

It's everything after: fixing hook pacing, re-timing captions that drift by half a second, cutting a 47-second draft down to a 28-second YouTube cut without killing the rhythm.

That's where the hours disappear. And that's also where an AI workflow either proves itself… or falls apart.

This isn't a tool list. It's the actual path from brief to ad-ready video.

Why Marketers Use AI Video Ad Generators

AI video production costs have dropped by about 91%—from roughly $4,500 per minute with traditional methods to around $400 using AI workflows.

According to Wyzowl's State of Video Marketing 2026, 91% of businesses use video, and 63% of marketers already use AI to create or edit content. This isn't a small change—it's a fundamental shift.

AI video is booming—generation volume grew 840% from Jan 2024 to Jan 2026, and 78% of marketing teams now use it regularly. So the question isn't whether to use AI, but how to use it effectively.

For ads, the real time-saver isn't ideation or storytelling—it's variant generation: turning one proven concept into multiple versions and formats, fast.

What AI Can and Can't Do Today

AI's Role in Hook, Visual, and Variant Generation

Feed in a script, a product URL, or a rough reference video, and a capable tool will hand you a first draft — auto-captions, basic cut, rough audio sync — in under 20 minutes. That draft isn't launch-ready. But it's a real starting point, not a blank timeline.

Hook generation has gotten notably better. Tools that pull from viral pattern libraries can suggest opening structures based on what's already performing in your category. I tested this on e-commerce product content — AI-generated hooks had a re-edit rate of around 40% in week one, which dropped to about 22% by week four once I dialed in the prompting. Still not zero, but meaningfully better than starting from scratch every time.

Where AI genuinely removes labor: captions, B-roll placement on talking-head footage, basic audio enhancement, format conversion. On captioned vs. uncaptioned video ads, industry data consistently shows captioned formats outperform silent autoplay by a wide margin on skip rate — and with Meta's autoplay defaulting to muted across feed placements, auto-captions aren't just a time-saver, they're a performance requirement. AI handles the 80%. You fix the 20% that matters.

Where Human Editing Still Wins — Pacing, CTA, Compliance

AI still struggles with pacing, so every video needs a review pass. Small choices—like when to cut, how long to hold a shot, or timing before a CTA—can significantly impact completion rates. I end up re-editing CTA timing about 35% of the time.

It also can't handle compliance. In regulated industries like health or finance, a human must review all claims—visuals, captions, and implied messaging included. Platform rules are stricter in 2026, and rejected ads cost more than the time saved skipping review.

Workflow — Brief to Ad-Ready Video

My actual workflow looks like this. Five steps, roughly 45–60 minutes for a single ad including one human review pass. Down from about 2.5 hours six months ago.

Step 1: Brief and Angle

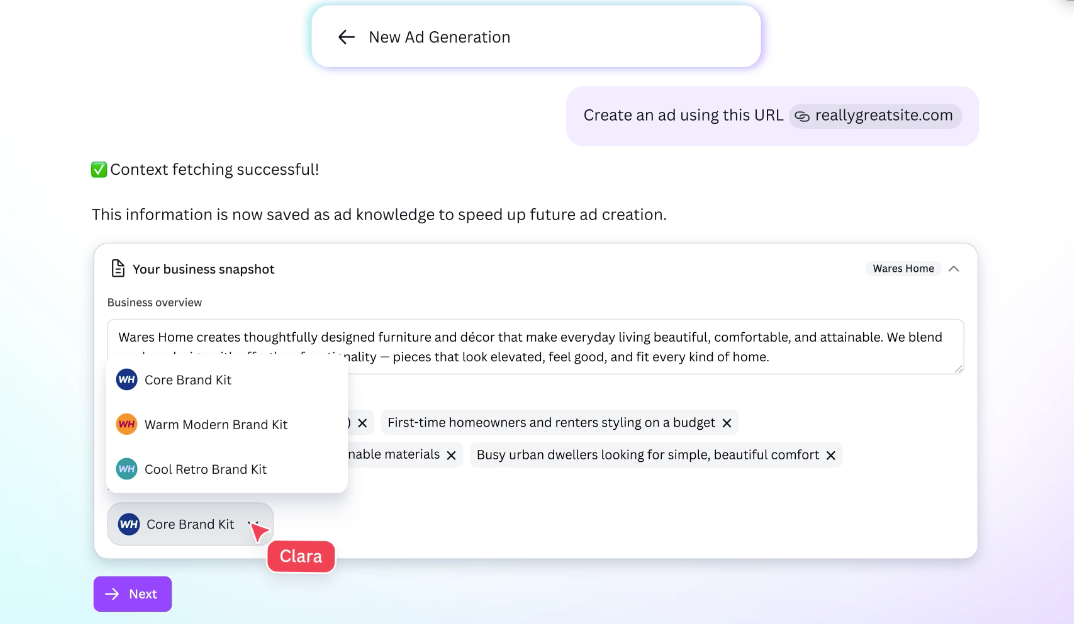

Before using any tools, I write a short brief that answers four questions: what the audience wants before seeing the ad, what they currently believe that is wrong, what single change the product creates, and what action I want them to take next. I keep it under 150 words and focus on being specific, because the quality of the brief sets the ceiling for everything that follows.
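The four-question brief can be kept honest with a small template. This is a minimal sketch, not a tool I'm claiming anyone ships: the class name `AdBrief` and its field names are hypothetical, and the only rule taken from the text is the 150-word ceiling.

```python
from dataclasses import dataclass, fields

MAX_BRIEF_WORDS = 150  # ceiling stated in the text


@dataclass
class AdBrief:
    """Hypothetical container for the four brief questions."""
    audience_want: str   # what the audience wants before seeing the ad
    wrong_belief: str    # what they currently believe that is wrong
    single_change: str   # the single change the product creates
    next_action: str     # the action they should take next

    def word_count(self) -> int:
        # Count whitespace-separated words across all four answers.
        return sum(len(getattr(self, f.name).split()) for f in fields(self))

    def problems(self) -> list[str]:
        # Flag unanswered questions and an over-long brief.
        issues = [f"'{f.name}' is empty" for f in fields(self)
                  if not getattr(self, f.name).strip()]
        if self.word_count() > MAX_BRIEF_WORDS:
            issues.append(
                f"brief is {self.word_count()} words; limit is {MAX_BRIEF_WORDS}")
        return issues
```

An empty list from `problems()` means the brief answers all four questions within the word limit. It says nothing about whether the answers are specific enough, which is still a human call.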

Step 2: Script and Hook Generation

With the brief ready, I create three to five hook variations to explore different angles. I write the first draft of the script myself, then use AI to refine it by removing filler, keeping sentences under 12 words, and eliminating passive voice.
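The 12-word rule is easy to check mechanically before or after the AI pass. A rough sketch, assuming sentences end in `.`, `!`, or `?` (the function name `long_sentences` is made up for illustration, and the splitter will misjudge abbreviations like "e.g."):

```python
import re

MAX_WORDS = 12  # "under 12 words" in the text, so 12 or more gets flagged


def long_sentences(script: str, max_words: int = MAX_WORDS) -> list[tuple[int, str]]:
    """Return (word_count, sentence) for each sentence with max_words or more words."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", script) if s.strip()]
    return [(len(s.split()), s) for s in sentences if len(s.split()) >= max_words]
```

Anything it returns is a candidate for trimming. It won't catch passive voice or filler; those still need a read-through.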

Step 3: AI Visual and Clip Generation

I input the script, product footage or URL, and one or two reference ads. For talking-head videos, AI suggests B-roll automatically. For product-focused content, AI handles cutting and assembly. Fully AI-generated visuals work best for abstract or lifestyle scenes, while real footage is better when realism matters. The result is usually about 60–75% complete.

Step 4: Editing, Captions, CTA Placement

I review the video manually, focusing on CTA timing, caption accuracy, audio levels, and brand consistency. I often fix 15–20% of captions. I place the CTA at the point of highest intent, which for a 30-second ad is usually around seconds 22–25.
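Some caption fixes in this step are timing rather than wording: the cues are right but land early or late. When the drift is constant, it can be corrected in one pass. A minimal sketch, assuming the captions are exported as SRT and the offset is the same for the whole file (the function name `shift_srt` is hypothetical):

```python
import re

# SRT timestamps look like 00:00:01,500 (hours:minutes:seconds,milliseconds).
TIMESTAMP = re.compile(r"(\d{2}):(\d{2}):(\d{2}),(\d{3})")


def shift_srt(srt_text: str, offset_ms: int) -> str:
    """Shift every SRT timestamp by offset_ms; negative values move captions earlier."""
    def shift(match: re.Match) -> str:
        h, m, s, ms = (int(g) for g in match.groups())
        total_ms = ((h * 60 + m) * 60 + s) * 1000 + ms + offset_ms
        total_ms = max(0, total_ms)  # never produce a negative timestamp
        h, rem = divmod(total_ms, 3_600_000)
        m, rem = divmod(rem, 60_000)
        s, ms = divmod(rem, 1_000)
        return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"

    # Note: this also rewrites anything in the caption text itself that
    # happens to look like a timestamp, which is rare but possible.
    return TIMESTAMP.sub(shift, srt_text)
```

For captions that run half a second late, `shift_srt(text, -500)` pulls every cue forward. Drift that grows over the length of the video needs per-cue correction, which this does not attempt.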

Step 5: Platform-Specific Variants

I adapt one video into three formats: 9:16 for TikTok and Reels, 1:1 for feed placements, and 16:9 for YouTube. I also check safe zones to ensure important text and visuals stay out of the bottom 20% in vertical formats.
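The crop math and the safe-zone check are both simple enough to script. The sketch below assumes a centered crop (real reframing often follows the subject instead) and measures the safe zone in pixels from the top of the frame; both function names are illustrative, and the 20% figure comes from the text.

```python
def center_crop(src_w: int, src_h: int, ratio_w: int, ratio_h: int) -> tuple[int, int, int, int]:
    """Largest centered crop with the target aspect ratio, as (x, y, width, height)."""
    # Integer cross-multiplication compares src_w/src_h with ratio_w/ratio_h.
    if src_w * ratio_h > src_h * ratio_w:
        # Source is wider than the target: keep full height, trim the sides.
        h = src_h
        w = src_h * ratio_w // ratio_h
    else:
        # Source is taller than (or equal to) the target: keep full width.
        w = src_w
        h = src_w * ratio_h // ratio_w
    return ((src_w - w) // 2, (src_h - h) // 2, w, h)


def in_bottom_zone(elem_y: int, elem_h: int, frame_h: int, zone_pct: int = 20) -> bool:
    """True if an element reaches into the bottom zone_pct percent of the frame."""
    limit = frame_h * (100 - zone_pct) // 100
    return elem_y + elem_h > limit
```

On a 1920×1080 source, the 9:16 crop comes out 607 pixels wide. Some encoders want even dimensions, so that may need rounding down to 606 before export. On a 1080×1920 vertical frame, anything extending below pixel row 1536 is inside the bottom 20%.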

Ad Formats by Platform

Meta — Feed, Reels, Stories

Meta's Andromeda ad retrieval system, which began rolling out in late 2024, rewards creative diversity — different angles, different opening frames — over minor variations of the same concept. The algorithm identifies which creative resonates with which audience segments; your job is to give it enough material. Marketing Brew's April 2026 coverage of Meta's AI ad system documents how media buyers are adapting their upload strategies in response.

Format specs: Feed uses 1:1 or 4:5 and runs 15–30 seconds; the first two seconds must hold attention with audio off. Reels: 9:16, hook in the first three seconds. Stories: 9:16, 15 seconds maximum, single message, one CTA.
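Those specs are easy to turn into a pre-upload check. This is a sketch only: the numbers are the ones listed above, not an official Meta reference, and `META_SPECS` and `check_export` are names made up for illustration. `None` means the text gives no bound.

```python
# Placement specs as stated in this article; verify against Meta's own
# documentation before relying on them.
META_SPECS = {
    "feed":    {"ratios": {"1:1", "4:5"}, "min_s": 15,   "max_s": 30},
    "reels":   {"ratios": {"9:16"},       "min_s": None, "max_s": None},
    "stories": {"ratios": {"9:16"},       "min_s": None, "max_s": 15},
}


def check_export(placement: str, ratio: str, duration_s: float) -> list[str]:
    """Return a list of spec violations for one exported file (empty if none)."""
    spec = META_SPECS[placement]
    issues = []
    if ratio not in spec["ratios"]:
        issues.append(f"{placement}: ratio {ratio} not in {sorted(spec['ratios'])}")
    if spec["min_s"] is not None and duration_s < spec["min_s"]:
        issues.append(f"{placement}: {duration_s}s is under {spec['min_s']}s")
    if spec["max_s"] is not None and duration_s > spec["max_s"]:
        issues.append(f"{placement}: {duration_s}s is over {spec['max_s']}s")
    return issues
```

It only covers ratio and length. The audio-off and hook-timing requirements still need eyes on the video.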

On AI disclosure for Meta (as of 2026): Per Meta's official AI disclosure policy, advertisers must actively disclose when ad creative contains AI-generated or significantly AI-altered visual or audio content. Meta's systems may also apply an "AI info" label automatically via detection. Disclosure is required for photorealistic AI-generated people, product renders, or scenes — not for AI-assisted captions, color grading, or headline optimization. When in doubt, disclose. Failing to disclose when you should is a larger operational risk than the label itself.

TikTok — In-feed, Spark Ads, UGC Ads

TikTok's first-second rule is real. If the opening frame doesn't create an interruption (visual, audio, or text), the viewer is already gone. I test opening frames in isolation before committing to a full edit: three candidate versions of the first second, and I pick the one that creates the most friction against scrolling past.

Spark Ads (boosting organic creator content) typically outperform polished AI-generated equivalents for conversion intent. The perceived authenticity does work that production quality can't replicate.

On AI disclosure for TikTok (as of 2026): Per TikTok's official AI-generated content requirements (updated February 2026), content won't be demoted solely because the AIGC label is enabled. Disclosure is required when AI substantially alters or generates visual or audio content — not for captions, scripts, or hashtag suggestions. TikTok integrated C2PA Content Credentials detection in January 2025 and can auto-label AI content regardless of self-disclosure. If the system labels it before you do, you cannot remove that label. Self-disclose through Ads Manager to control how it appears.

YouTube — Shorts, In-stream, Bumper

Shorts: treat like TikTok — 9:16, under 60 seconds, hook in the first second.

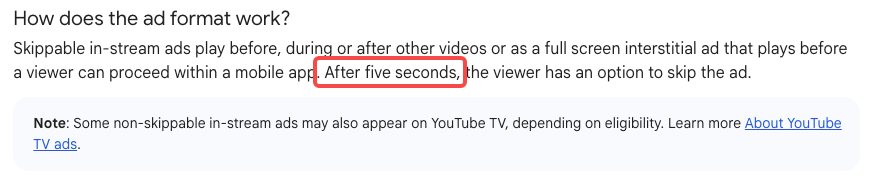

In-stream (skippable): viewers can skip after five seconds. The goal isn't to prevent every skip. It's to hold the viewers who would normally skip, by making the opening frame immediately relevant to what they were watching or searching for. Per Google's YouTube ad format specs, skippable in-stream ads can run from 12 seconds to six minutes, with CPV billing kicking in at the 30-second mark (or at the end of the ad, if it is shorter than 30 seconds) or on interaction.
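The CPV billing rule is worth spelling out, because it decides which views you pay for. A small sketch, assuming the usual rule that an ad shorter than 30 seconds counts as viewed once it is watched in full (the function name is hypothetical):

```python
def is_billable_view(watched_s: float, ad_length_s: float, interacted: bool = False) -> bool:
    """True if a skippable in-stream impression would count as a paid view."""
    # A view is billed at 30 seconds, or at the full length for shorter ads.
    threshold = min(30.0, ad_length_s)
    return interacted or watched_s >= threshold
```

A viewer who skips a 45-second ad at second six costs nothing under this rule, which is why an opening that filters out the wrong audience early is not wasted spend.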

Bumper ads: six seconds maximum, non-skippable, one idea, one message. AI is genuinely good at pulling bumpers from longer cuts — trim to the most visually striking six seconds, add a single text CTA. The format is sold on CPM and works well for retargeting audiences who've already seen your longer creative.

A/B Testing Variants at Scale

This is the real unlock in AI video: not cheaper production, but more variations.

My current testing setup:

3 hooks

2 bodies (same offer, different angles)

2 CTAs

That matrix allows 12 possible combinations (3 × 2 × 2). I launch the 4 most distinct, run them for 72 hours, cut the bottom 2, and iterate on what works.
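Three hooks, two bodies, and two CTAs give 12 possible combinations, so picking 4 is a selection problem. I choose by judgment, but here is one way to sketch it in code. The assumption is that "most distinct" means the set of four whose members differ from each other in as many slots as possible; that scoring rule is illustrative, not a platform metric.

```python
from itertools import combinations, product


def hamming(a: tuple, b: tuple) -> int:
    """Number of slots (hook, body, CTA) in which two variants differ."""
    return sum(x != y for x, y in zip(a, b))


def spread(subset) -> int:
    """Total pairwise difference across a set of variants."""
    return sum(hamming(a, b) for a, b in combinations(subset, 2))


def most_distinct(hooks, bodies, ctas, k: int = 4) -> tuple:
    """Brute-force the k variants with the largest total pairwise difference."""
    variants = list(product(hooks, bodies, ctas))
    # 12 variants choose 4 is only 495 subsets, so exhaustive search is fine.
    return max(combinations(variants, k), key=spread)
```

With this scoring, the winning set always uses all three hooks and splits bodies and CTAs evenly. It treats every difference as equal, so it can't tell a meaningful angle change from a cosmetic one. The hypothesis behind each variant still has to come from you.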

Core rule: every variant must test a clear hypothesis. Not "different hook," but "humor vs. urgency for a cold top-of-funnel audience."

According to Wyzowl 2026 data, 71% of creators use AI for first drafts, then refine manually. Fully automated workflows create volume but little insight. Human-in-the-loop workflows turn AI into a fast test-and-learn engine.

Common Mistakes That Kill AI Ad Performance

Using AI output as final output. Every AI-generated video needs a human review pass. Not because AI is bad at this — it isn't — but because the last 20% is judgment, not generation.

Building one good ad instead of five testable ones. The algorithmic ad environment on both Meta and TikTok rewards creative velocity. A well-structured AI workflow makes the five-ads approach cost-effective. Use it.

Ignoring the audio-off scenario. Check every ad with audio off before it ships. If the visual story doesn't hold without sound, fix it. Most platform feed placements default to muted autoplay.

Skipping the disclosure check. The 2026 cross-platform AI content labeling requirements differ significantly across Meta, TikTok, and YouTube. Understand which elements require disclosure on each platform before producing, not after an ad gets rejected.

Optimizing for production quality over hook quality. A visually polished ad with a weak hook will underperform a rough ad with a strong hook. I've run this experiment enough times to stop being surprised by it. Spend your human review time on the hook and CTA first.

FAQ

Is AI ad content allowed by Meta and TikTok? Yes, with disclosure requirements on both platforms as of 2026. The rules apply to AI-generated or substantially AI-altered visual and audio content — not to captions, scripts, or text overlays. Meta's Advantage+ suite auto-labels creative it generates internally; third-party AI tools require manual advertiser disclosure. TikTok auto-detects via C2PA metadata and will label without consent if your content triggers it. When in doubt on either platform, disclose.

How much does an AI video ad cost to produce? AI tools cost about $20–$50 per month and can produce many videos, bringing the tool cost to under $1 per video. Including 15–30 minutes of human review, the total cost is usually $15–$80 per ad. Traditional production typically costs $800–$5,000+.
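The per-ad figure is just the subscription spread across output, plus review time. A quick sketch of that arithmetic: the monthly video count and the hourly rate in the usage note are hypothetical inputs, not numbers from my own books.

```python
def cost_per_ad(subscription_usd: float, videos_per_month: int,
                review_minutes: float, hourly_rate_usd: float) -> float:
    """Tool share plus human review cost for one ad, in dollars."""
    tool_share = subscription_usd / videos_per_month    # subscription spread over output
    review_cost = review_minutes / 60 * hourly_rate_usd  # human review pass
    return round(tool_share + review_cost, 2)
```

For example, a $50 plan producing 100 videos a month, with 30 minutes of review at an assumed $60 per hour, comes to `cost_per_ad(50, 100, 30, 60)`, or $30.50. The review time dominates. The tool subscription is close to a rounding error once volume goes up.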

Can AI create UGC-style ads that look authentic? Sometimes, but real UGC usually performs better. AI UGC works best for early testing or quickly adapting existing content.

Do I still need a video editor? Yes. AI handles basic editing tasks, but humans are still needed for pacing, messaging, compliance, and brand consistency.

Conclusion

Pick one platform, one ad format, one offer. Write the brief properly — the four questions above. Generate three hook variations. Build one full ad using AI for the rough cut and spend your human time on pacing and CTA. Export platform variants. Run it.

Then do that ten more times.

The teams winning with AI video in 2026 aren't the ones with the most sophisticated setups. They're the ones running the most iterations. AI makes iteration cheaper. Use it for that.
