AI Video Generators Reddit Actually Recommends (2026)

Hey everyone, Dora here. I spent three days doing something I probably shouldn't admit: reading Reddit threads about AI video tools instead of actually making videos.

Not procrastinating. Genuinely stuck. The tool I'd been using for four months had just bumped its credit pricing by 40% with zero warning. So I did what any reasonable person does—went to Reddit and read what people were actually saying. Not the vendor blogs. The actual threads, sorted by "controversial," with the heated arguments and the people who'd burned $30 in credits for one usable clip.

Here's what I found.

What Reddit Communities Value

Free Tier Limits and Paywall Friction

The free plan question dominates r/aivideo more than any other topic. Not because everyone's broke—it's because a free tier is the clearest signal of whether a company trusts its own product.

Reddit's benchmark right now: Kling's daily-refreshing free credits. Sixty-six credits per day, replenishing every 24 hours. Runway gives you 125 credits once and that's it: a one-time trial, not a sustainable tier. That distinction matters. One is "try it properly," the other is "demo and buy." Pika gets mentioned as a third option for budget users, since it allows multiple generations a day without paying.

The paywall friction discussion leads into a second complaint: watermarks on free-tier output. Reddit users call this "pay to evaluate." It generates consistent negative sentiment, especially for creators who need to test the tool against their specific content format before committing.

Output Quality vs. Cost Per Usable Second

The quality conversation has shifted. Two years ago Reddit was debating "can AI make video at all." Now it's "does this pass the 30-frame-scroll test on TikTok."

The metric that keeps appearing is something users call Cost Per Usable Second. You're not paying for generations—you're paying for seconds of footage you can actually cut into a timeline. Tools with inconsistent results get labeled "slot machines." You insert credits and hope for a jackpot.

By this metric, Veo 3.1 looks expensive at $0.50/second but gets rehabilitated by 4K output at 60fps. Runway Gen-4 gets criticized for burning $15+ in credits just to get a character to walk across a frame without artifacts. Kling at v2.6 holds up well—the v3.0 pricing backlash (90-120 credits per clip) pushed many users back to v2.6, which is still what most threads recommend for volume work.
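If you want to track this for your own sessions, here's a minimal sketch of the Cost Per Usable Second math. The `Generation` record and the dollar figures in the sample session are my own illustrative assumptions, not numbers from any thread or vendor price list; the only idea taken from Reddit is "total spend divided by seconds you can actually use."

```python
from dataclasses import dataclass


@dataclass
class Generation:
    """One generation attempt (hypothetical record, fields are assumptions)."""
    cost_usd: float   # what the attempt cost, converted from credits
    seconds: float    # length of the clip it produced
    usable: bool      # did it survive into the edit timeline?


def cost_per_usable_second(generations: list[Generation]) -> float:
    """Total spend across ALL attempts divided by usable seconds only."""
    total_cost = sum(g.cost_usd for g in generations)
    usable_seconds = sum(g.seconds for g in generations if g.usable)
    if usable_seconds == 0:
        raise ValueError("no usable footage yet, so the metric is undefined")
    return total_cost / usable_seconds


# Made-up session: four 5-second attempts at $1.00 each, one keeper.
session = [
    Generation(cost_usd=1.0, seconds=5.0, usable=False),
    Generation(cost_usd=1.0, seconds=5.0, usable=False),
    Generation(cost_usd=1.0, seconds=5.0, usable=True),
    Generation(cost_usd=1.0, seconds=5.0, usable=False),
]
print(f"${cost_per_usable_second(session):.2f} per usable second")
```

In that made-up session the sticker price is $0.20 per generated second, but the number that matters is four times higher because three of the four clips got thrown away.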

Commercial Use Rules

The ToS landscape is a mess and changes constantly. The consistent advice from power users: read the commercial use clause before generating anything for a client. The catch is usually in the clauses covering generated likeness rights and content that uses competitor brand assets. US-based tools (Runway, Pika) get praised for clearer documentation. Kling and Hailuo get flagged by enterprise users for data handling questions. For most independent creators, not a blocker. For regulated industries, worth checking.

Most Recommended Tools on Reddit

Tools Frequently Upvoted in r/aivideo

Kling AI (v2.6) is the most consistently upvoted tool for general-purpose social content in 2026. Made by Kuaishou Technology—the Chinese short-video platform—it went global in 2024 and the motion physics are better than they have any right to be at this price. Cloth physics, hair dynamics, realistic movement. Daily free credits are real and usable (roughly 1-2 videos/day).

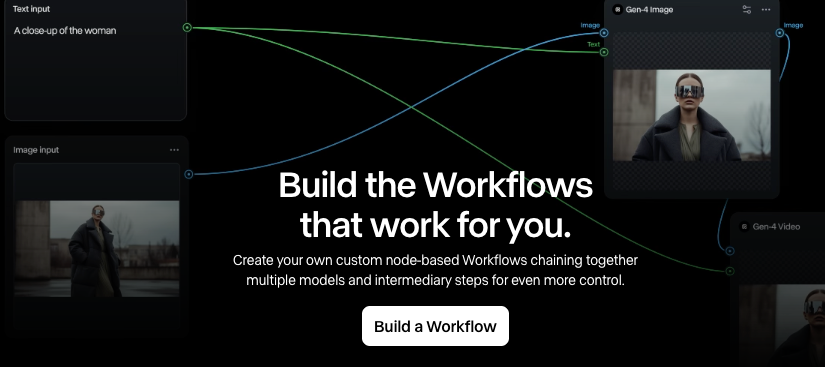

Runway Gen-4 has a different kind of following—people who need character consistency across scenes. Gen-4 References lets you upload multiple character images and maintain appearance across different shots. For ad campaigns with a recurring character, or any project where a face needs to look the same in clip 7 as it did in clip 1, Runway is the recommendation. It's also genuinely faster: 30-90 seconds per generation vs. Kling's 5-10 minutes in Professional mode. The $95/month Unlimited plan is praised by high-volume users as the only real unlimited option. For 50+ videos per month, it becomes cheaper per clip than credit-based alternatives.
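To sanity-check the "50+ videos" claim against your own numbers, a rough break-even sketch helps. The $95/month figure is the one quoted above; the per-clip cost on a credit plan (`ASSUMED_CREDIT_COST_PER_CLIP`) is a placeholder I made up, so swap in whatever your actual tool charges.

```python
import math


def flat_plan_cost_per_clip(monthly_price: float, clips_per_month: int) -> float:
    """Effective per-clip cost on a flat monthly plan."""
    if clips_per_month <= 0:
        raise ValueError("clips_per_month must be positive")
    return monthly_price / clips_per_month


def break_even_clips(monthly_price: float, credit_cost_per_clip: float) -> int:
    """Smallest monthly clip count where the flat plan costs no more than credits."""
    if credit_cost_per_clip <= 0:
        raise ValueError("credit_cost_per_clip must be positive")
    return math.ceil(monthly_price / credit_cost_per_clip)


UNLIMITED_MONTHLY = 95.0              # plan price quoted in the post
ASSUMED_CREDIT_COST_PER_CLIP = 2.0    # hypothetical, replace with your own

print(f"${flat_plan_cost_per_clip(UNLIMITED_MONTHLY, 50):.2f} per clip at 50 clips")
print(break_even_clips(UNLIMITED_MONTHLY, ASSUMED_CREDIT_COST_PER_CLIP), "clips to break even")
```

At 50 clips the flat plan works out to $1.90 per clip, so the claim holds whenever your credit-based alternative costs more than that per finished clip. Failed generations count too, which pushes the real break-even lower.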

Hailuo (MiniMax) keeps showing up as what one thread called "the sleeper hit mainstream blogs ignore." Praised for fluid character movement and anime/stylized motion—if you're making AMV content or anything 2D animation-adjacent, it comes up repeatedly. Capped at 720p in most plans, but users who prioritize motion energy over pixel count (upscaling later with Topaz) swear by it. Budget-friendly compared to either Kling or Runway.

Veo 3.1 (Google) gets a more complicated reception. It scores second only to Runway Gen-4 on the Artificial Analysis video benchmark, and it's the only major tool where you generate a video and get synchronized audio included—no post-production audio step. The catch: it lives inside Google's ecosystem, mostly accessed through Gemini Pro at $20/month. The January 2026 update added native 9:16 vertical output and 4K at 60fps. For creators already in Google's infrastructure, it integrates well. For everyone else, it's adding a dependency.

Tools Upvoted in r/StableDiffusion

Wan 2.6 is described in local deployment threads as the superior model for professional motion. The complication: ongoing community anxiety that Alibaba is closing source code for newer versions—threads call this "Wan greed." Most users are sticking with the current open version while it exists.

LTX 13B is the community hope. Fully open-source, faster than Wan, and it now supports audio generation. The criticism: prompt-sensitive, struggles with complex motion. It's the tinkerer's choice; Wan is the production choice. For first-time local inference, LTX is where most threads recommend starting.

Tools Mentioned for TikTok/Shorts

Kling dominates for volume and cost. Native vertical output, realistic motion, daily free credits. Pika for stylized or motion-graphics-adjacent content: the visual signature is distinct and works for specific aesthetics, not photorealism. HeyGen whenever talking-head or avatar content comes up. Even people who don't use it acknowledge its lip-sync quality as the category standard.

Comparison Table — Reddit Community Picks

| Tool | Best For | Free Tier | Reddit Sentiment |
| --- | --- | --- | --- |
| Kling AI v2.6 | Volume, motion realism, social clips | 66 credits/day (refreshes) | Consistently positive; v3.0 pricing complaint |
| Runway Gen-4 | Character consistency, speed, pro workflows | 125 credits (one-time) | Respected; expensive at low volume |
| Hailuo / MiniMax | Stylized motion, anime content | Limited | Underrated, positive |
| Veo 3.1 (Google) | Integrated audio, 4K, cinematic B-roll | Via Gemini trial | Positive; ecosystem lock-in concern |
| Pika | Creative/stylized, free generation | Generous daily | Good for casual use |
| HeyGen | Talking-head, lip-sync, avatar | Limited | Category standard |
| Wan 2.6 (local) | Open-source, professional motion | Free (self-hosted) | Trusted; closure anxiety |
| LTX 13B (local) | Open-source, fast, experimentation | Free (self-hosted) | Hopeful; prompt sensitivity |

Tools Reddit Users Warn Against

Credit burn with no usable output. The biggest complaint across multiple tools. This is the "slot machine" framing again: some tools deliver high-variance results where you burn 4-5 generations for one usable clip. Runway Gen-4 gets this criticism specifically around character locomotion. Users report burning $15+ trying to get a character to cross a room without glitching.
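The "4-5 generations for one usable clip" complaint translates into a hit rate of roughly 20-25%, and you can turn that into an expected cost before you start spending. This is a back-of-envelope sketch that assumes every attempt is independent with the same hit rate (a simplification; real sessions are streakier), and the $1.50 per generation is a made-up figure.

```python
def expected_attempts(hit_rate: float) -> float:
    """Average generations needed for one usable clip, assuming independent tries."""
    if not 0 < hit_rate <= 1:
        raise ValueError("hit_rate must be in (0, 1]")
    return 1 / hit_rate


def expected_cost_per_usable_clip(cost_per_generation: float, hit_rate: float) -> float:
    """Price of one attempt scaled by how many attempts a keeper usually takes."""
    return cost_per_generation * expected_attempts(hit_rate)


ASSUMED_COST_PER_GENERATION = 1.50  # hypothetical dollars per attempt

for hit_rate in (0.25, 0.20):
    cost = expected_cost_per_usable_clip(ASSUMED_COST_PER_GENERATION, hit_rate)
    print(f"hit rate {hit_rate:.0%}: about ${cost:.2f} per usable clip")
```

The useful part is comparing tools on this number instead of on price per generation: a pricier tool that lands half its attempts can come out ahead of a cheap one that lands one in five.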

"Unlimited" that isn't. Runway's Unlimited plan offers unlimited Explore Mode but not guaranteed fast processing—peak times slow significantly. Not hidden, but users who don't read closely get surprised.

ToS changes with no notice. Happened with multiple tools in 2025. A tool is fine for commercial use at signup; six months later the ToS has changed. Community advice: screenshot your acceptance date, check quarterly. Smaller tools that got acquired are most frequently cited.
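A screenshot works, but if you'd rather automate the quarterly check, one option is to save a fingerprint of the terms text on the day you accept and compare it later. This is my own sketch, not something from the threads: `TermsRecord`, the tool name, and the sample text are all hypothetical, and you'd still paste in the terms text yourself.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


def fingerprint(terms_text: str) -> str:
    """SHA-256 of the text with whitespace collapsed, so reflowed pages still match."""
    normalized = " ".join(terms_text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


@dataclass
class TermsRecord:
    """What you keep on file at signup (hypothetical structure)."""
    tool: str
    accepted_on: date
    digest: str


def terms_changed(record: TermsRecord, current_text: str) -> bool:
    """True if the terms you see today differ from the ones you accepted."""
    return fingerprint(current_text) != record.digest


# Made-up example text, standing in for a real commercial use clause.
accepted_text = "You may use outputs commercially on paid plans."
record = TermsRecord("ExampleTool", date(2025, 1, 15), fingerprint(accepted_text))

todays_text = "You may use outputs commercially on Pro plans only."
if terms_changed(record, todays_text):
    print(f"{record.tool}: terms differ from what you accepted on {record.accepted_on}")
```

A changed fingerprint only tells you something moved, not what. You still have to read the new clause.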

Safety filters that flag normal content. US-based tools (Sora, Veo) get consistent criticism for over-aggressive filtering—terms like "shooting a scene" triggering content warnings. Kling and Hailuo are repeatedly noted as more permissive for non-political creative work. This is explicitly cited as a reason users switch.

From Reddit Picks to Real Workflow

From Generation to Finished Short

The workflow most recommended for TikTok/Shorts at volume:

Step 1: Character/asset generation. Handle this separately—Midjourney or Flux for reference images, locked to a seed. Most creators skip this and regret it when their character's face changes between clips.

Step 2: Video generation. Upload reference image to Kling (physics-heavy shots) or Runway (cross-scene character consistency). Keep prompts minimal, motion settings conservative to reduce face drift.

Step 3: Audio and captions. If you used Veo 3.1, audio is included. Everyone else adds it in post. For captions—dedicated captioning tools still beat AI video platform auto-captions for accuracy. CapCut's caption tool gets mentioned often.

Step 4: Format and edit. 9:16 vertical, hook in the first two seconds. Tools like Runway's editor handle some of this within the generation platform—not perfect, but it saves context switching.

The honest reality: generation is faster than it's ever been. Post-generation is still where most time goes. Reddit's experienced users are consistent on this: "AI compressed the hardest parts of production" is accurate. "AI replaced production" is not.

FAQ

Most-upvoted free tool on Reddit? Kling AI. The daily-refreshing credits are genuinely usable, not a one-time trial. For local inference, LTX 13B is the r/StableDiffusion recommendation.

Are Reddit picks safe for commercial use? Most paid plans permit it, but the terms vary and change. Check current ToS before any client project—not the version you remember from signup.

Why do Redditors prefer open-source? No credits, no censorship, no subscription cancellation risk. The barrier is hardware—RTX 4090 minimum for reasonable speed. The Wan 2.1 GitHub is where the community tracks development. For everyone without capable hardware, the math doesn't work unless you're producing at serious volume.

Do recommendations age quickly? Yes. Faster than almost any other tech category. Kling v3.0's pricing backlash happened within weeks of launch. The threads that stay accurate longest focus on evaluation frameworks (how to calculate Cost Per Usable Second, how to judge motion quality) rather than specific tool rankings.

Final Recommendations

For short-form content at volume, cost-conscious: Kling AI v2.6. Best motion quality-to-price ratio available. Start with the free tier, test your actual format before paying.

For character consistency across clips or ad campaign work: Runway Gen-4. The reference system is genuinely different. Worth the higher price for professional output.

For talking-head or avatar content: HeyGen. No other tool matches it at this specific job.

For local inference, no subscriptions: Wan 2.6 for production quality, LTX 13B for speed and experimenting. The LTX Video project has active community support.

One thing that doesn't show up in most Reddit threads: the tool that fits your workflow beats the tool with the highest benchmark score. I've watched creators switch to "better" tools and lose 40% of their output velocity because the new tool doesn't fit how they actually work. The right model is the fastest one you'll still be using under a deadline.

Free tiers exist. Use them.
