CutClaw: AI Agent That Edits Long Videos into Shorts

Last Tuesday I had nine raw clips sitting in a folder, a deadline, and genuine dread about opening Premiere. The footage had been sitting there for four days — Dora's classic move: shoot everything, edit nothing.

That's when CutClaw's arXiv paper showed up in my feed: an autonomous multi-agent system that claims to turn hours of raw footage into music-synced short-form cuts from a single text prompt.

I've been burned by enough "AI editor" demos to know the drill. Looks clean in the trailer, falls apart on real footage. So I didn't bookmark it. I cloned the repo.

Three days and a lot of conda errors later — here's what I actually found.

What Is CutClaw

CutClaw is an open-source, autonomous video editing system built on a multi-agent framework. You give it raw footage, an audio track, and a single text instruction describing the editing style. It handles the rest — scripting, shot selection, pacing, and rendering.

It's a research project out of GVCLab, published as an arXiv preprint. Not a SaaS. Not a browser tool. A Python-based pipeline you run locally or on a GPU server.

The Multi-Agent Framework: Playwriter, Editor, Reviewer

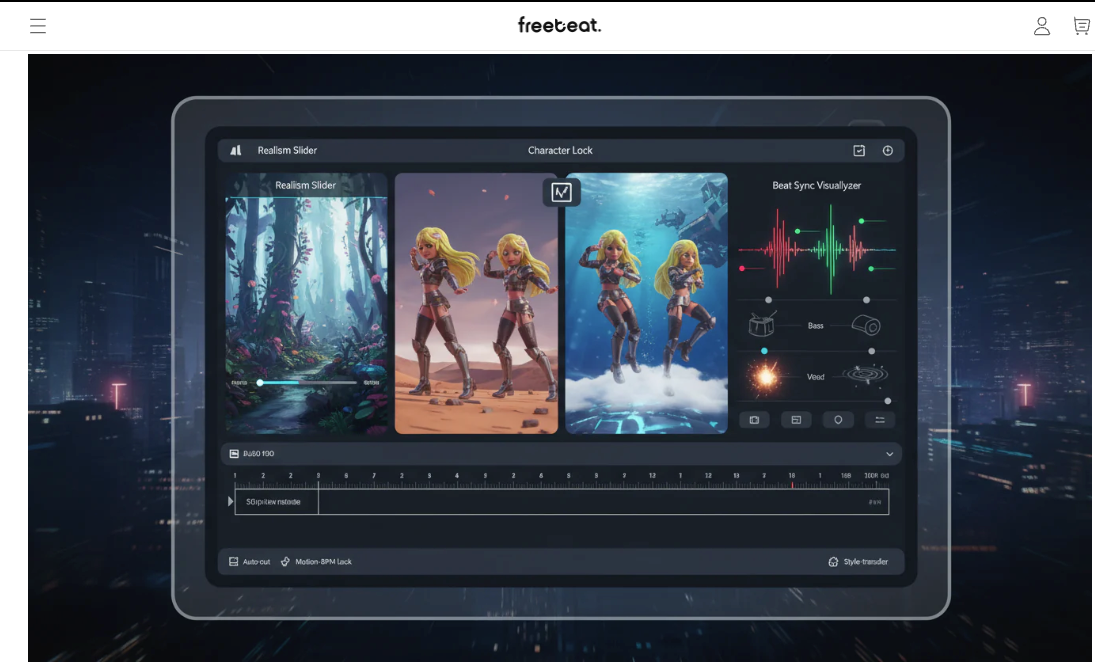

This is the part that actually caught my attention. CutClaw doesn't just clip footage — it runs three specialized agents in sequence:

Playwriter Agent: Acts as the global narrative planner. It reads the music structure — beats, energy shifts, sections — and uses that as a temporal anchor. Then it maps your instruction against the abstracted video scenes to produce a story plan.

Editor Agent: Executes the plan at the shot level. It localizes precise clip timestamps that match the narrative structure, constrained by musical rhythm.

Reviewer Agent: The quality gate. It evaluates each selected clip against three criteria — plot relevance, visual aesthetics, and instruction adherence. Weak frames get rejected before the final render.

The system mimics a professional post-production workflow through a collaborative, coarse-to-fine hierarchy. That's the key phrase. It's not a single model guessing what looks good. It's three agents with distinct roles checking each other's work.
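To make the plan, propose, review loop concrete, here is a minimal sketch of how three roles like these could be wired together. This is my own illustration, not CutClaw's code: `assemble`, `Clip`, and the three toy agent functions are hypothetical names, and the real agents call multimodal models rather than returning canned values.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Clip:
    slot: str      # which story beat this clip is meant to fill
    start: float   # seconds into the source footage
    end: float


def assemble(
    playwriter: Callable[[str], List[str]],
    editor: Callable[[str, int], Clip],
    reviewer: Callable[[Clip], bool],
    instruction: str,
    max_attempts: int = 3,
) -> List[Optional[Clip]]:
    """Plan once, then propose and review a clip for every story slot."""
    timeline: List[Optional[Clip]] = []
    for slot in playwriter(instruction):        # coarse: global story plan
        accepted = None
        for attempt in range(max_attempts):     # fine: shot-level search
            candidate = editor(slot, attempt)
            if reviewer(candidate):             # quality gate
                accepted = candidate
                break
        timeline.append(accepted)               # None if nothing passed review
    return timeline


# Toy stand-ins for the three agents (assumptions for illustration only).
def toy_playwriter(instruction: str) -> List[str]:
    return ["intro", "build", "drop"]


def toy_editor(slot: str, attempt: int) -> Clip:
    return Clip(slot=slot, start=10.0 * attempt, end=10.0 * attempt + 2.0)


def toy_reviewer(clip: Clip) -> bool:
    return clip.start >= 10.0   # pretend the first proposal is always weak


timeline = assemble(toy_playwriter, toy_editor, toy_reviewer, "fast travel montage")
for clip in timeline:
    print(clip)
```

The point of the structure is that the reviewer can send the editor back for another attempt without re-running the global plan.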

What It Actually Produces — Music-Synced Short-Form Cuts

The output is a short video where every cut lands on a beat. Not approximately. The system extracts musical beat positions, downbeat positions, and energy signals, then aligns shot transitions to those anchor points. For this rhythm-aware segmentation, beat detection relies on madmom, a Python music information retrieval (MIR) library that is well established in academic music analysis research.
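The simplest way to picture beat alignment is nearest-beat snapping: take a rough cut time and move it to the closest detected beat. The sketch below assumes you already have a sorted list of beat timestamps (in a real run those would come from a beat tracker such as madmom). `snap_to_beat` is a hypothetical helper of mine, and CutClaw's actual alignment logic may well be more involved.

```python
from bisect import bisect_left
from typing import List


def snap_to_beat(t: float, beats: List[float]) -> float:
    """Return the beat timestamp closest to t. `beats` must be sorted and non-empty."""
    i = bisect_left(beats, t)
    if i == 0:
        return beats[0]
    if i == len(beats):
        return beats[-1]
    before, after = beats[i - 1], beats[i]
    # Ties go to the earlier beat.
    return before if t - before <= after - t else after


beats = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5]   # a 120 BPM grid, in seconds
rough_cuts = [0.62, 1.3, 2.49]
snapped = [snap_to_beat(t, beats) for t in rough_cuts]
print(snapped)  # [0.5, 1.5, 2.5]
```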

The framing adjusts automatically too. If your source footage is horizontal and you're targeting vertical formats, content-aware cropping identifies the primary subject and reframes the shot.

How CutClaw Works

Hierarchical Decomposition of Long Footage

The reason most AI tools choke on long video is context length. An hour of footage, even sparsely sampled, produces far more frames than most models can fit in a context window. CutClaw's first move is to solve this problem before anything else.

It first deconstructs raw video and audio into structured captions, then uses a multi-agent pipeline to plan shots, select clip timestamps, and validate final quality before rendering.

Specifically, it runs a "bottom-up multimodal footage deconstruction" pass — turning continuous video into structured semantic units (scenes, sections) and audio into musical segments. This compressed representation is what the agents actually reason over, not the raw frames.
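Here is one way to picture that compressed representation: a handful of timestamped captions and music sections that flatten into a short text block an agent can read. The class and field names (`Scene`, `MusicSection`, `FootageIndex`, `as_prompt`) are my own assumptions, not CutClaw's actual schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Scene:
    start: float      # seconds
    end: float
    caption: str      # model-generated description of the scene


@dataclass
class MusicSection:
    start: float
    end: float
    label: str                                  # e.g. "intro", "drop"
    beats: List[float] = field(default_factory=list)


@dataclass
class FootageIndex:
    scenes: List[Scene]
    sections: List[MusicSection]

    def as_prompt(self) -> str:
        """Flatten the index into plain text for an agent to reason over."""
        lines = ["VIDEO SCENES:"]
        lines += [f"[{s.start:.1f}-{s.end:.1f}s] {s.caption}" for s in self.scenes]
        lines.append("MUSIC SECTIONS:")
        lines += [
            f"[{m.start:.1f}-{m.end:.1f}s] {m.label} ({len(m.beats)} beats)"
            for m in self.sections
        ]
        return "\n".join(lines)


index = FootageIndex(
    scenes=[Scene(0.0, 12.5, "drone shot over coastline")],
    sections=[MusicSection(0.0, 8.0, "intro", [0.0, 0.5, 1.0])],
)
prompt = index.as_prompt()
print(prompt)
```

A few lines of text per scene is what keeps an hour of footage inside a context window.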

Music Beat Detection and Shot Alignment

The audio analysis module combines automatic speech recognition (ASR) with music-structure parsing: it extracts beats, downbeats, pitch, and energy for music-aware segmentation. Those timestamps become the skeleton the Playwriter builds around. Every narrative beat in the script maps to a musical moment.

This is meaningfully different from "just cut on the beat" tools. The Playwriter uses music structure — not just individual beats — to decide which type of footage should go where. A high-energy drop might call for an action shot. A quiet bridge might anchor a character moment.
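As a rough intuition for structure-aware matching, here is a toy heuristic that pairs the most energetic music section with the most active scene, then works down the list. It is a deliberately crude stand-in: the Playwriter uses a model to reason about narrative, and the `energy` and `motion` scores and the `match_by_energy` helper are hypothetical.

```python
from typing import Dict, List


def match_by_energy(sections: List[dict], scenes: List[dict]) -> Dict[str, str]:
    """Pair music sections with scenes by rank: highest energy gets highest motion."""
    by_energy = sorted(sections, key=lambda s: s["energy"], reverse=True)
    by_motion = sorted(scenes, key=lambda s: s["motion"], reverse=True)
    return {sec["label"]: sc["caption"] for sec, sc in zip(by_energy, by_motion)}


sections = [
    {"label": "intro", "energy": 0.2},
    {"label": "drop", "energy": 0.9},
    {"label": "bridge", "energy": 0.4},
]
scenes = [
    {"caption": "sunset timelapse", "motion": 0.1},
    {"caption": "cliff jump", "motion": 0.95},
    {"caption": "walking through market", "motion": 0.5},
]
pairing = match_by_energy(sections, scenes)
print(pairing)
```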

Content-Aware Cropping for Vertical Formats

This runs during the final render stage. CutClaw identifies the core subject in each selected clip and adjusts the aspect ratio to fit platform requirements. According to the project's GitHub repository, the system supports .mp4 and .mkv source files, with GPU-accelerated video decoding recommended for practical speed.
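The geometry behind the reframing step is simple once the subject is located. The sketch below assumes a subject detector has already given you the subject's horizontal center in pixels. It computes a full-height 9:16 window around that point and clamps it to the frame. `vertical_crop` is a hypothetical helper; how CutClaw actually finds the subject is not something I am reproducing here.

```python
from typing import Tuple


def vertical_crop(
    frame_w: int,
    frame_h: int,
    subject_cx: int,
    ratio: Tuple[int, int] = (9, 16),
) -> Tuple[int, int, int, int]:
    """Return (x, y, w, h) of a full-height crop centered on the subject."""
    crop_h = frame_h
    crop_w = min(frame_w, frame_h * ratio[0] // ratio[1])
    x = subject_cx - crop_w // 2
    x = max(0, min(x, frame_w - crop_w))   # keep the window inside the frame
    return x, 0, crop_w, crop_h


# 1920x1080 source, subject in the middle, then near the right edge.
print(vertical_crop(1920, 1080, 960))    # (657, 0, 607, 1080)
print(vertical_crop(1920, 1080, 1700))   # (1313, 0, 607, 1080)
```

Some encoders expect even pixel dimensions, so in practice you may want to round the crop width down to an even number before rendering.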

Can Creators Actually Use It Right Now

Here's where I want to be straight with you.

Open-Source Status and Setup Requirements

CutClaw is fully open-source. You can clone the repo today. Setup requires Python 3.12, conda, and a GPU-accelerated environment for anything beyond short clips. There's a Streamlit UI at localhost:8501.

But this is a research prototype. The setup isn't a three-click install. If you've never touched conda or set up a Python GPU environment, plan for a couple hours of troubleshooting before you get your first successful run.

API Dependencies and Cost Considerations

CutClaw calls external multimodal models for the agent reasoning steps. The paper lists MiniMax, Gemini3, Qwen3-VL, and Qwen3-Omni as the underlying models. That means every editing job incurs API costs, and how much depends on footage length and which model you configure. For long-form footage, this adds up. It's not a tool you'd run casually on every raw clip.

The Hugging Face paper page for CutClaw has early discussion from the research community if you want to track what models are working well in practice.

What's Missing vs. a Production-Ready Tool

No caption generation. No subtitle overlay. No text animations. No native TikTok or Reels export presets. The output is a rendered video file — clean, rhythm-synced, properly cropped. Everything else you'd add in post is your problem.

If you're used to tools where captions auto-generate and you're done, CutClaw is a different kind of tool. It handles the structural edit. The finishing layer is still manual.

What This Means for Short-Form Video Workflows

Auto-Editing Raw Footage — Where It Fits

The honest use case right now: content operators with large libraries of raw footage and music tracks they already own.

Think travel vloggers sitting on 40GB of trip footage. Event videographers with 6-hour recordings. Brands with product shoot archives. For these use cases, the value proposition is real — one prompt, one render, one rough cut that's actually cut-to-music rather than randomly assembled.

CutClaw was designed to bridge the gap between simple clip assembly and instruction-aligned, music-driven storytelling. That's an accurate description of what it does when it works.

Post-Generation Editing Still Needed — Captions, Branding, Platform Adaptation

What CutClaw produces is a first cut, not a published piece. You'll still need to add captions (critical for most short-form platforms), any branded lower-thirds or end cards, platform-specific formatting, and audio mixing if the source dialogue competes with the music track.

For reference, Adobe Premiere Pro's auto-transcription and tools like DaVinci Resolve handle the caption and finishing layer well — the workflow would be CutClaw for structural assembly, then your existing NLE for the last 20%.

CutClaw Limitations and Trade-Offs

API Latency and Rate Limits

Each run makes multiple sequential API calls — footage decomposition, narrative planning, shot selection, review. On long source material, this pipeline can take significantly longer than real-time. If your model provider has rate limits (they all do), you'll hit them on batch processing.
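If you wrap your own model calls around a pipeline like this, the usual defense against rate limits is retrying with exponential backoff and jitter. This is a generic sketch, not something taken from the CutClaw repo: `RateLimitError` is a hypothetical stand-in for whatever exception your provider's client raises on an HTTP 429.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) error."""


def call_with_backoff(
    fn: Callable[[], T],
    max_tries: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on RateLimitError with exponential backoff plus jitter."""
    for attempt in range(max_tries):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_tries - 1:
                raise                      # out of retries, surface the error
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
    raise ValueError("max_tries must be at least 1")
```

Passing `sleep` in as a parameter keeps the helper easy to test without actually waiting.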

No Caption, Subtitle, or Text Overlay Support

Worth repeating because it's easy to overlook: CutClaw does not generate captions. For TikTok or Reels where captions drive retention, this means the CutClaw output is an intermediate file, not a final deliverable.

Output Quality Depends on Source Footage

The Reviewer agent applies aesthetic criteria, but it's working with what you give it. Poorly lit footage, shaky handheld shots, or heavily compressed source files will limit what the system can select. Garbage in, rhythm-synced garbage out.

FAQ

Q1: Is CutClaw free to use?

The software itself is open-source and free. Running it costs money because the agent reasoning steps call external multimodal model APIs (Qwen3-VL, Gemini, MiniMax). API costs scale with footage length. There's no hosted free tier — you're paying your own API bills.

Q2: What models does CutClaw support?

The paper tests Qwen3-VL, Qwen3-Omni, Gemini3, and MiniMax. The system is configurable — you can swap model backends depending on what API access you have. Performance may vary across models; the paper's benchmark results were generated with specific model configurations.

Q3: Can CutClaw export for TikTok or Reels?

It will output a vertically cropped video, but there's no dedicated export preset with TikTok or Reels metadata. The content-aware cropping handles aspect ratio. Resolution and bitrate settings are yours to configure. Platform-specific requirements (file size caps, codec preferences) need to be handled in post.
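For the "handled in post" part, a re-encode through ffmpeg is the usual route. The sketch below only builds the command as a list, so you can inspect it before running it yourself with `subprocess.run`. The file names, the 1080x1920 resolution, and the bitrates are placeholder assumptions; check each platform's current upload specs before settling on values.

```python
from typing import List


def ffmpeg_export_cmd(
    src: str,
    dst: str,
    width: int = 1080,
    height: int = 1920,
    video_bitrate: str = "8M",
    audio_bitrate: str = "192k",
) -> List[str]:
    """Build an ffmpeg command that re-encodes a vertical cut as H.264/AAC MP4."""
    return [
        "ffmpeg", "-y",                       # overwrite output if it exists
        "-i", src,
        "-vf", f"scale={width}:{height}",     # resize to the target resolution
        "-c:v", "libx264", "-b:v", video_bitrate,
        "-c:a", "aac", "-b:a", audio_bitrate,
        "-movflags", "+faststart",            # move metadata up front for streaming
        dst,
    ]


cmd = ffmpeg_export_cmd("cutclaw_output.mp4", "reels_ready.mp4")
print(" ".join(cmd))
```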

Q4: Does CutClaw add captions or text overlays?

No. CutClaw focuses on structural editing — shot selection, pacing, music synchronization. Caption generation, subtitle rendering, and text overlays are not part of the pipeline. You'll need a separate tool for that layer.

Q5: How does CutClaw compare to manual editing?

Manual editing gives you full creative control and supports every finishing element (captions, graphics, color). CutClaw gives you a music-synced rough cut from raw footage without touching a timeline. The trade-offs are setup complexity, API costs, and the post-processing still required. For high-volume workflows with consistent footage types, CutClaw could replace the most repetitive part of the assembly process. For polished, branded content, it's a starting point, not a replacement.

I'm still not sure where this lands for most creators. The architecture is genuinely impressive for a research prototype. The gap between "impressive demo" and "in my workflow tomorrow" is still significant — mostly around setup complexity and the missing finishing layer. But the underlying approach — agents that reason about narrative and music structure simultaneously — is the right direction.

Whether it gets there as a research project or someone builds a polished wrapper around it first, that's the question I'm watching.
