GPT Image 2: What It Means for Video Creators

GPT Image 2 has been in my timeline three times in the last 48 hours. That frequency usually means one of two things: either it's genuinely useful, or someone spent a lot on distribution. I've learned not to guess which until I've actually looked at what the thing does.

Here's the short version before we go any further: this is an image model, not a video model. If you came here wondering whether GPT Image 2 replaces your video workflow — it doesn't. But for specific parts of the upstream work, the improvements are real enough to pay attention to.

What GPT Image 2 Actually Is

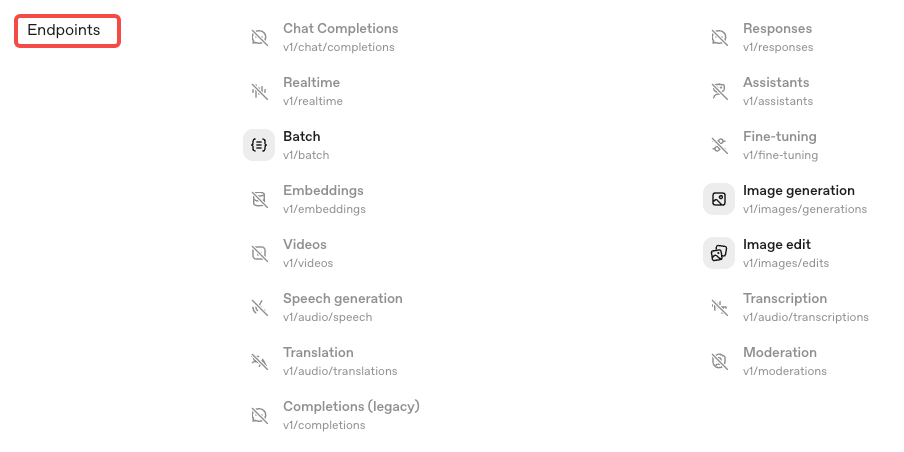

OpenAI launched ChatGPT Images 2.0 on April 21, 2026. The underlying model is accessible via the API as gpt-image-2. The model snapshot is gpt-image-2-2026-04-21.

ChatGPT Images 2.0 vs. gpt-image-2 API — Same Model, Different Access

Same model. Two access layers. In ChatGPT, it shows up as ChatGPT Images 2.0. In the API, it's gpt-image-2.

Launch Access — Plus, Team, Enterprise, Free Tier, API

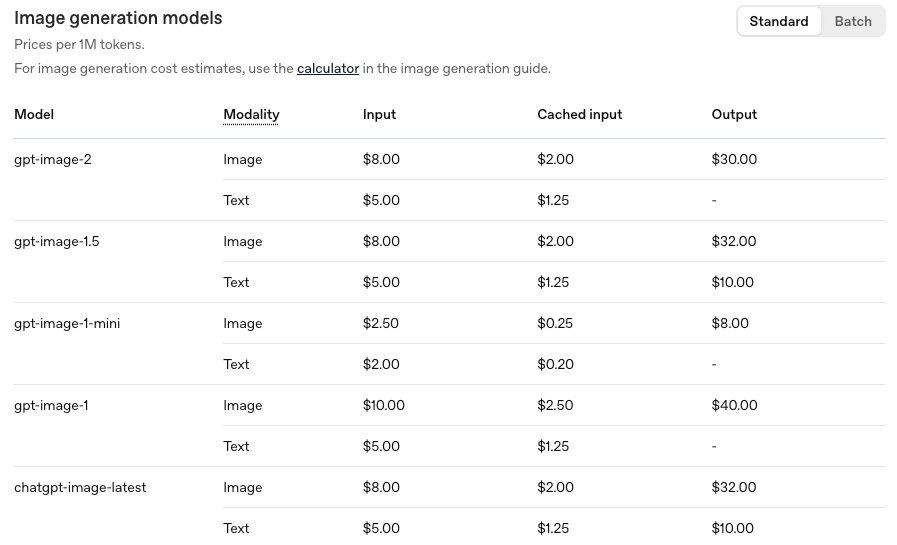

Basic access rolls out to all users including free tier. Advanced features — the reasoning and multi-image generation — require Plus ($20/month), Pro, or Business. API access is live with pricing dependent on output quality and resolution. Don't use the per-image numbers circulating on X right now. Check the official OpenAI pricing page directly — it includes an image generation calculator for estimates.

The Five Capabilities Video Creators Should Care About

I went in pretty skeptical. The demo looked better than real life — that's always true. But a few things held up under closer inspection.

99% Text Rendering — Thumbnails and On-Screen Titles

This is the improvement getting the most attention, and it's the one that actually holds up for short-form work.

Previous GPT Image models were unreliable on text inside images. Garbled words, wrong letters, inconsistent spacing — anyone who's tried to generate a thumbnail with readable copy knows the drill. Per OpenAI's launch post, gpt-image-2 reaches over 99% accuracy on text rendering. Community testing from LM Arena's earlier canary period confirmed the pattern: signs, overlays, multi-word strings, stylized title cards — all substantially more reliable.

For video creators, this is the practical unlock: thumbnails with readable text, on-screen title cards, product annotation frames — all of these become viable to generate rather than design manually.

Higher Resolution Support — 2K Source Frames for I2V

The model supports up to 2K resolution, with 4K available in API beta. Per The New Stack's launch coverage, outputs above 2K can be inconsistent.

Why this matters for video: if you're using still images as source frames for image-to-video models, resolution is a real constraint. Cleaner, higher-res source frames give downstream video models more to work with. This isn't a direct improvement to your video output — but it removes one upstream bottleneck.
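A quick pre-flight check before handing frames to an I2V model can catch undersized sources. The sketch below reads width and height straight from a PNG header. The 2048-pixel long-edge threshold is my assumption for what "2K" means here, the check only handles PNG files, and `png_dimensions` and `meets_long_edge` are illustrative names, not part of any tool mentioned in this post.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_dimensions(data: bytes) -> tuple[int, int]:
    """Return (width, height) read from the IHDR chunk of a PNG."""
    # Layout: 8-byte signature, 4-byte chunk length, 4-byte chunk type "IHDR",
    # then width and height as big-endian unsigned 32-bit integers.
    if len(data) < 24 or not data.startswith(PNG_SIGNATURE) or data[12:16] != b"IHDR":
        raise ValueError("not a PNG with a leading IHDR chunk")
    width, height = struct.unpack(">II", data[16:24])
    return width, height


def meets_long_edge(data: bytes, min_long_edge: int = 2048) -> bool:
    """True if the longer side is at least min_long_edge pixels (assumed 2K cutoff)."""
    return max(png_dimensions(data)) >= min_long_edge
```

In practice you would pass in the bytes of a generated frame, for example `meets_long_edge(open("frame.png", "rb").read())`, and regenerate or skip anything that comes back `False`.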

Multilingual Typography — Non-English Creators and Markets

The model now handles text rendering in Japanese, Korean, Chinese, Hindi, and Bengali. Not just "it won't garble the characters" — actual localized rendering with correct glyphs and spacing, per OpenAI's launch details.

If you're producing content for multiple markets or working with clients who need localized assets, this changes what's practical to generate. Previously, non-Latin text in AI-generated images was essentially unusable. Now it's reportedly functional.

Multi-Image-from-One-Prompt — Ad Variants and Storyboards

Up to eight coherent images from a single prompt. For short-form producers, the structural use case is clear: generate a set of thumbnail variants or storyboard frames in one pass instead of iterating one image at a time.

I'm still in the middle of testing this at volume. The structural use case holds — I just haven't run enough batches to know where the consistency breaks.
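For API users, a batch request might look something like the sketch below. It only builds the JSON body for the Images API endpoint (`POST /v1/images/generations`) and sends nothing. Two assumptions to flag: that gpt-image-2 accepts the same `n` and `size` parameters as earlier GPT Image models, and that the eight-image limit maps onto `n`. `build_variant_request` and `MAX_IMAGES_PER_PROMPT` are names I made up for this post, so check the API reference before relying on any of it.

```python
import json

MAX_IMAGES_PER_PROMPT = 8  # "up to eight" per prompt, per the launch details above


def build_variant_request(prompt: str, count: int, size: str = "1024x1024") -> dict:
    """Build a JSON-serializable request body for a batch of image variants.

    Assumes gpt-image-2 takes the same `n` and `size` fields as earlier
    GPT Image models; the default size is likewise an assumption.
    """
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if not 1 <= count <= MAX_IMAGES_PER_PROMPT:
        raise ValueError(f"count must be between 1 and {MAX_IMAGES_PER_PROMPT}")
    return {"model": "gpt-image-2", "prompt": prompt, "n": count, "size": size}


# Example: four thumbnail variants from one prompt.
body = build_variant_request(
    'YouTube thumbnail, bold yellow text reading "STOP SCROLLING"', count=4
)
print(json.dumps(body, indent=2))
```

Validating the count locally keeps a typo from turning into a rejected (or unexpectedly expensive) request.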

Watermarking and C2PA — Platform Compliance Implications

This is the part that actually matters if you're producing commercial content.

GPT Image 2 outputs embed C2PA Content Credentials — a cryptographically signed metadata standard that major platforms now read automatically. TikTok integrated C2PA detection in January 2025 and now scans all uploaded media for it. If your AI-generated thumbnail or source frame carries C2PA metadata, TikTok, Instagram, and YouTube may automatically apply an "AI-generated" label regardless of your disclosure choices.

This isn't inherently a problem. Properly labeled AI content remains eligible for monetization on TikTok under current rules. But if you're using GPT Image 2 outputs in commercial ad creatives and you want control over how labeling appears, understanding how C2PA Content Credentials interact with platform detection is now part of the job.
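If you want to know whether a file you're about to upload still carries Content Credentials, a rough presence check is possible with nothing but the standard library. The C2PA specification stores the manifest in a PNG chunk of type `caBX`, so the sketch below walks the chunk list and looks for it. Caveats: it is PNG-only (JPEG and other formats embed manifests differently), it assumes your output is a PNG, and it does not validate the signature. The function names are mine.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
C2PA_CHUNK_TYPE = b"caBX"  # PNG chunk type the C2PA spec uses for the manifest store


def png_chunk_types(data: bytes) -> list[bytes]:
    """List the chunk types of a PNG in file order."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    types = []
    offset = len(PNG_SIGNATURE)
    while offset + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, payload, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[offset:offset + 8])
        types.append(chunk_type)
        if chunk_type == b"IEND":
            break
        offset += 8 + length + 4
    return types


def has_c2pa_chunk(data: bytes) -> bool:
    """Presence check only: True if a caBX chunk exists. Does not verify it."""
    return C2PA_CHUNK_TYPE in png_chunk_types(data)
```

Running `has_c2pa_chunk` on the bytes of a file before and after your export step tells you whether your editing pipeline is preserving or silently dropping the metadata, which is worth knowing either way.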

What GPT Image 2 Does Not Do

The sales page says "new era of image generation." Reality said something else once I looked for what it won't do.

Still an Image Model — No Video Generation

This cannot be stated clearly enough. GPT Image 2 generates still images. It does not generate video, animate sequences, or produce motion. If you came here because you saw "GPT Image 2 for video creators" and assumed that meant video — that's a framing problem with how it's being covered, not a capability of the model.

Motion, Camera, Physics Live Downstream

OpenAI acknowledges the model still struggles with tasks requiring a coherent physical-world model — origami guides, objects on reversed or angled surfaces, fine repetitive detail. For video creators, the relevant implication is simpler: any motion, camera movement, or temporal consistency has to come from a separate image-to-video model downstream. GPT Image 2 gives you a better source frame. What you do with that frame is a different tool's job.

Where It Fits in a Short-Form Video Workflow

Here's where I plug it into a production stack — or at least where it plausibly fits.

Upstream — Source Frame Generation

The practical insertion point is upstream of your video generation step:

Generate thumbnail candidates with readable text

Create storyboard reference frames for I2V prompting

Produce localized versions of on-screen title cards

Build ad variant sets for A/B testing across hooks
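One way to keep the variant and localization passes organized is to expand them into a job list before generating anything. This is a minimal sketch, assuming you already have translated title text per locale. The template wording, `build_jobs`, and the field names are hypothetical, not anything OpenAI prescribes.

```python
from itertools import product

# Hypothetical prompt template; adjust the wording to your own style guide.
TEMPLATE = 'Vertical 9:16 title card, bold sans-serif text reading "{text}", {style}'


def build_jobs(titles: dict[str, str], styles: list[str]) -> list[dict]:
    """Return one prompt job per (locale, style) pair.

    `titles` maps a locale code to already-translated title text.
    """
    return [
        {
            "locale": locale,
            "style": style,
            "prompt": TEMPLATE.format(text=text, style=style),
        }
        for (locale, text), style in product(titles.items(), styles)
    ]


jobs = build_jobs(
    {"en": "3 Editing Mistakes", "ja": "編集の3つのミス"},
    ["high-contrast neon", "clean white studio"],
)
for job in jobs:
    print(job["locale"], "|", job["prompt"])
```

Keeping locale and style on each job makes it easier to trace a bad render back to the exact prompt that produced it.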

Downstream — Still Needs I2V, Captioning, Platform Adaptation

GPT Image 2 output is a static image. The rest of the short-form workflow — motion, captions, audio, platform sizing, revision loops — doesn't change. You still need an I2V model to turn source frames into video, a captioning layer, audio processing, and whatever editing environment you're already using.

The promise of "three steps from idea to publishable short-form video" is still not three steps. GPT Image 2 makes one of those steps faster and more reliable. That's useful. That's also all it is.

Early Pricing Signal for Creators

Don't use the per-image numbers circulating on X and Reddit right now. As of April 21, OpenAI's public pricing uses token-based billing. The OpenAI API pricing page is the only source worth trusting for current numbers.

What is confirmed: pricing varies by output quality and resolution. High-res outputs cost more. Free-tier users get basic access. Advanced features require a paid plan.

What to Watch in the First Weeks

Known gaps: Fine or repetitive visual detail still exceeds the model's fidelity limits in some cases. Labels and part diagrams may need manual review. Outputs above 2K are in API beta, with inconsistent results flagged by OpenAI itself.

Policy: C2PA metadata in outputs means automated platform labeling is possible even without self-disclosure. If you're using GPT Image 2 for commercial ad creatives, understand how C2PA interacts with platform detection before you scale. TikTok's enforcement on unlabeled AI content increased 340% in the latter half of 2025. That's existing policy applied to a new tool.

Platform reception: Still early. The model has been live for less than 24 hours at the time of writing. Real-world performance data on whether text rendering holds at high volume, whether C2PA triggers automatic labeling in practice, and whether 2K output quality is consistent enough for production use — none of that exists yet. Come back in two weeks.

FAQ

Is GPT Image 2 free? Basic access is rolling out to all ChatGPT users including free tier. Advanced features — native reasoning, multi-image generation — require Plus, Pro, or Business. API access is token-billed.

Can I use outputs commercially on TikTok/Reels? Properly labeled AI-generated content can be monetized on TikTok under current rules. The complication is that C2PA metadata in GPT Image 2 outputs may trigger automatic labeling by the platform regardless of your disclosure choices. Factor that in for commercial content.

Does it make videos? No. GPT Image 2 generates still images. Motion requires a separate image-to-video model downstream.

Is the watermark removable? C2PA Content Credentials live in image metadata, not as a visible pixel watermark. They can be stripped by re-saving or converting the file — but doing so to avoid platform disclosure requirements puts you at policy risk. Platforms are scanning for this metadata.

Bottom Line for Short-Form Creators

This is where most tools fall apart — the gap between what they promise and what they actually change about daily production.

GPT Image 2 closes a specific gap: reliable text in images, at higher resolution, across more languages, with batch generation. For thumbnail production, source frame creation, and localized asset generation, those are real improvements worth testing.

It doesn't change your video workflow. It improves one upstream step in it.

Worth testing if you produce at volume and text accuracy in images has been a consistent friction point. Not the workflow transformation the distribution would suggest.

I could be wrong about this part — I've only run it for a day. I'll update when I have more data.
