HappyHorse vs Seedance 2.0: Which AI Video Model Is Better for Short Video?

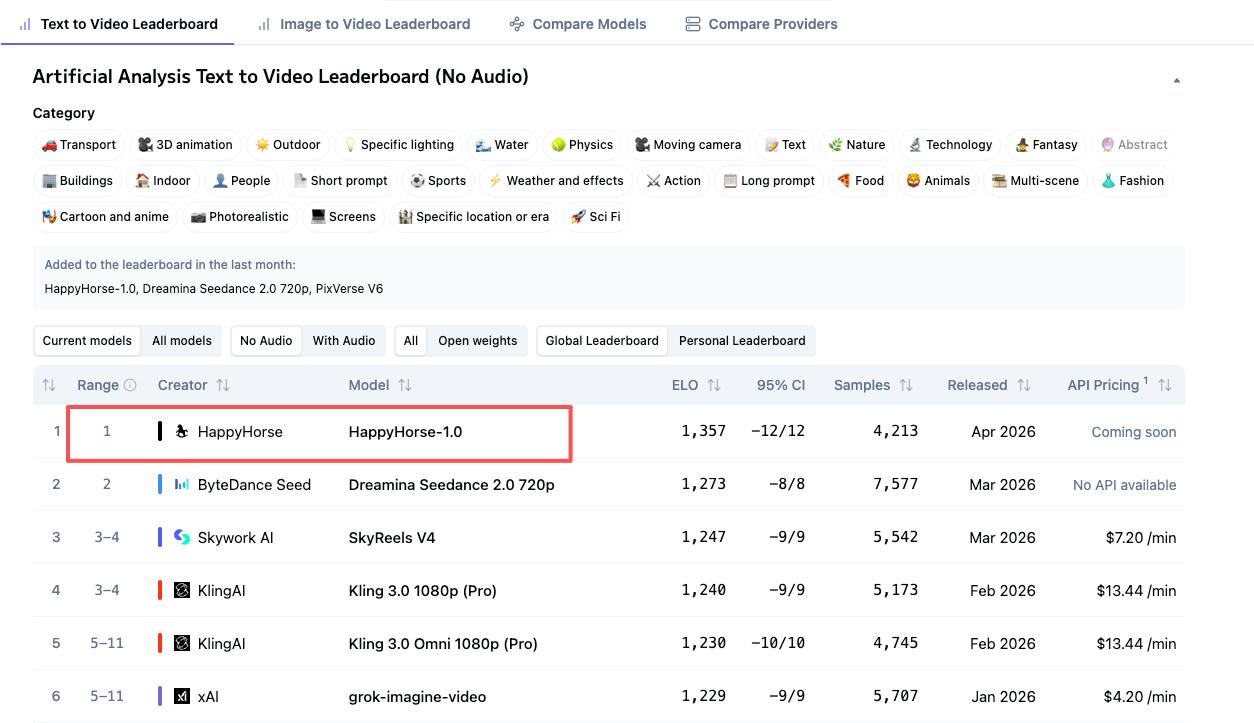

There's a name at the top of the Artificial Analysis Video Arena leaderboard right now that wasn't there a week ago: HappyHorse-1.0. No team announcement. No launch blog. Just an Elo score of 1,333 in text-to-video (no audio) — 60 points ahead of where Seedance 2.0 had been sitting, comfortably, for weeks.

I track this leaderboard. A 60-point Elo gap isn't noise — it means HappyHorse is winning roughly 58–59% of head-to-head blind comparisons. That's real. But here's the thing that most roundups aren't telling you: the leaderboard question and the "which one do I actually use tomorrow" question are two completely different questions. This comparison is about the second one.
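
To see where that 58–59% figure comes from, here is a minimal sketch using the standard Elo logistic curve with a 400-point scale. This assumes the arena follows the conventional Elo formula, which the leaderboard's published numbers are consistent with but which is not confirmed here. The function name `expected_win_rate` is illustrative.

```python
def expected_win_rate(elo_gap: float) -> float:
    """Expected win probability for the higher-rated model.

    Uses the conventional Elo logistic curve (base 10, 400-point scale).
    Assumption: the arena's ratings follow this standard formula.
    """
    return 1.0 / (1.0 + 10.0 ** (-elo_gap / 400.0))


# Gap quoted above: HappyHorse 1,333 vs Seedance 2.0 at 1,273 (T2V, no audio)
gap = 1333 - 1273
print(f"{gap}-point gap -> {expected_win_rate(gap):.1%} expected win rate")
```

Under that assumption a 60-point gap works out to a win rate between 58% and 59%, which matches the figure above. A gap of zero gives exactly 50%.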

What you'll get from this piece: a clear breakdown of motion quality, edit-readiness, audio performance, vertical output, pricing, and which creator type is better served by each model — including honest caveats about what we still don't know.

HappyHorse vs Seedance 2.0 at a Glance

| Dimension | HappyHorse-1.0 | Seedance 2.0 |
| --- | --- | --- |
| Max resolution | 1080p (self-reported) | 2K (2048×1080) |
| Aspect ratios | 16:9, 9:16, 4:3, 3:4, 21:9, 1:1 | 16:9, 9:16, 4:3, 3:4, 21:9, 1:1 |
| Vertical (9:16) native | Yes (claimed) | Yes |
| Native audio | Yes (joint generation) | Yes (joint generation) |
| Lip-sync languages | 7 (EN, ZH, JP, KR, DE, FR + Cantonese) | 8+ languages |
| Max clip length | 5–8s | 15s |
| T2V Elo (no audio) | 1,333 (#1) | 1,273 (#2) |
| I2V Elo (no audio) | 1,392 (#1) | 1,355 (#2) |
| T2V Elo (with audio) | 1,205 (#2) | 1,219 (#1) |
| Public API access | ❌ Not yet | ❌ Not yet (CapCut rolling out) |
| Free tier | Yes (browser demo) | Yes (225 daily credits via Dreamina) |
| Pricing (paid) | Not published | ~$9.60/month (Dreamina basic) |
| Open source | Claimed; weights pending | No |

Leaderboard Rankings Side by Side

On the Artificial Analysis Video Arena, which uses blind user voting rather than lab self-reporting, HappyHorse-1.0 currently leads both the text-to-video category (Elo 1,333) and image-to-video category (Elo 1,392) without audio. Seedance 2.0 holds the #1 spot in both categories with audio, sitting at Elo 1,219 (T2V) and Elo 1,162 (I2V).

The gap with audio is narrow — 14 points in T2V, just 1 point in I2V — which is close enough that it could shift as more votes come in.

One thing worth keeping in mind: Seedance 2.0 has accumulated over 7,500 vote samples in the T2V category, while HappyHorse's sample count isn't publicly available yet. Elo scores for newly added models are more volatile than established ones. Translation: Seedance's numbers are battle-tested. HappyHorse's are fresh.
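
To put rough numbers on "battle-tested" versus "fresh", here is a hedged sketch of how the uncertainty around a win rate shrinks as votes accumulate. It treats every vote as an independent head-to-head comparison at a fixed win rate and uses a normal approximation. That is a simplification: real arena votes are spread across many opponents, and the leaderboard's actual rating method is not described here. The 200 and 1,000 vote counts are hypothetical; only the 7,500 figure comes from the leaderboard.

```python
import math


def win_rate_margin(p: float, n_votes: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for an observed win rate (normal approximation)."""
    return z * math.sqrt(p * (1.0 - p) / n_votes)


def win_rate_to_elo_gap(p: float) -> float:
    """Invert the conventional Elo curve: rating gap implied by win rate p."""
    return 400.0 * math.log10(p / (1.0 - p))


p = 0.585  # win rate implied by a 60-point gap under the standard Elo formula
for n in (200, 1000, 7500):  # 200 and 1000 are hypothetical sample sizes
    m = win_rate_margin(p, n)
    low = win_rate_to_elo_gap(p - m)
    high = win_rate_to_elo_gap(p + m)
    print(f"{n:>5} votes: {p:.1%} +/- {m:.1%}, Elo gap roughly {low:.0f} to {high:.0f}")
```

The point of the sketch is the trend rather than the exact figures: under these assumptions, the plausible range for the gap is several times wider at a few hundred votes than at several thousand.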

Motion Quality and Visual Realism

This is where HappyHorse's blind test performance becomes interesting — and where the community is divided.

Some users who tested both reported that HappyHorse-1.0 visibly trailed Seedance 2.0 in character details and dynamic coherence, and skeptics question whether the Elo score alone tells the full story. A reasonable read: HappyHorse may have been optimized to perform well in blind evaluation scenarios (slightly better facial stability, slightly better audio-visual alignment, slightly more pleasing imagery) without necessarily being a stronger model at the architectural level.

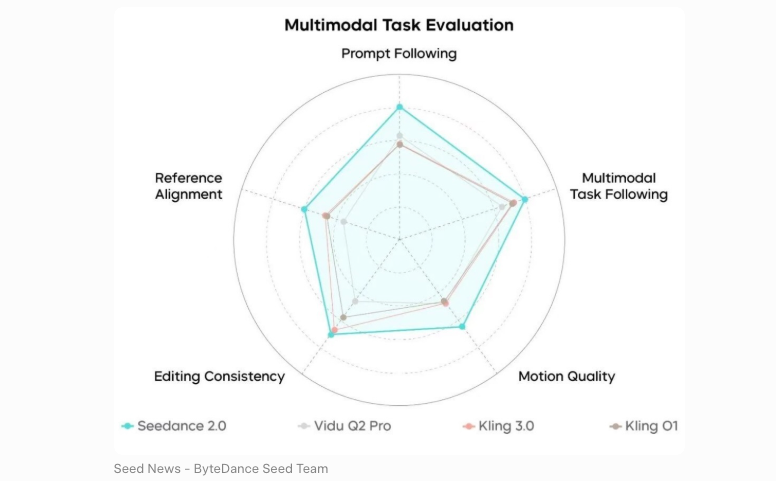

Seedance 2.0's motion quality is well-documented at this point. It is billed as offering strong motion stability with audio-video joint generation, along with director-level control over lighting, shadow, camera movement, and character performance.

HappyHorse's self-reported motion story is built around its unified architecture. The 15-billion parameter model uses a 40-layer self-attention Transformer with 4 modality-specific layers on each end and 32 shared layers — designed for single-stream processing with per-head gating for stable training. Whether that translates to better real-world motion quality than Seedance remains unverified by independent testing.

Practical verdict: For short clips where you need motion that holds over 5–8 seconds, both models are competitive. Seedance has the longer track record. HappyHorse has the better blind votes — for now.

How Edit-Ready Is the Output?

This is the part most benchmark roundups skip. The Elo score doesn't tell you how many minutes you'll spend fixing the clip before you can post it.

Resolution and Aspect Ratio

Seedance 2.0 outputs video at up to 2K resolution (2048×1080) at 24fps, across six aspect ratios: 16:9, 9:16, 4:3, 3:4, 21:9, and 1:1. Vertical 9:16 is natively supported, which matters a lot for TikTok and Reels workflows.

HappyHorse claims native 1080p support across the same six aspect ratios (per the official site), but as of April 8, 2026 no third party has independently verified those specifications. The 1080p figure is repeated on secondary sites but has not been confirmed through downloadable weights or API testing. Flag that before building a workflow around it.

Trimming, Captioning, and Reformatting

Seedance 2.0 is already integrated into CapCut. ByteDance confirmed that Dreamina Seedance 2.0 is rolling out in CapCut, allowing creators to draft, edit, and sync video and audio content using prompts, images, or reference videos — with clips up to 15 seconds across six aspect ratios. If you're already working inside CapCut's ecosystem, the handoff from generation to trim-and-caption is near-zero friction.

HappyHorse gives you a browser-generated clip. You download it, bring it into your editor, and proceed normally. There's no native post-processing layer yet. That's not a dealbreaker — it's just more steps.

Audio and Lip-Sync

Both models generate audio natively in the same pass as video — this is a meaningful architectural difference from older tools that bolt audio on afterward.

HappyHorse 1.0 jointly processes text, video, and audio tokens within a single unified Transformer, supporting lip-sync across seven languages: English, Mandarin, Cantonese, Japanese, Korean, German, and French.

Seedance 2.0 uses a unified multimodal audio-video joint generation architecture, supporting text, image, audio, and video inputs simultaneously — with what ByteDance describes as cinematic-grade audio-visual synchronization.

The key difference: when audio is included in the comparison, Seedance 2.0 edges HappyHorse by 14 Elo points in T2V and 1 point in I2V — suggesting its audio-visual pairing is slightly more polished in head-to-head blind voting. If audio quality is a priority in your clips, Seedance is the safer bet right now.

Access, Pricing, and Availability

This is where the comparison gets lopsided fast.

Seedance 2.0 has a working access path for international creators. Dreamina (ByteDance's overseas creative platform) launched internationally around February 24, 2026, offering 225 free daily credits shared across all Dreamina tools, with paid plans starting at approximately $9.60/month for the basic Dreamina membership. A phased CapCut rollout began in Brazil, Indonesia, Malaysia, Mexico, the Philippines, Thailand, and Vietnam, with more markets being added over time.

HappyHorse has no public API. As of April 8, 2026, both the GitHub and Model Hub links on the primary site say "coming soon," with no downloadable weights, no documented pricing, and no production-ready access available outside of a browser demo.

The open-source claim is worth noting but not yet actionable: the site states that the base model, distilled model, super-resolution model, and inference code have all been released — but this hasn't been verified since no weights are actually downloadable.

Best Fit by Creator Type

Solo Creators (TikTok / Reels / Shorts)

Use Seedance 2.0. The CapCut integration means you can generate, trim, and caption inside one tool. HappyHorse's browser demo is functional for testing, but there's no production workflow built around it yet.

Brand Campaign Teams

Evaluate both, deploy Seedance 2.0. Seedance 2.0 supports uploading up to 9 images, 3 videos (15 seconds total), and 3 audio files simultaneously, with reference-based consistency for faces, clothing, and brand elements across the clip. That multimodal input depth is useful for controlled brand scenarios. HappyHorse's equivalent capabilities are unverified at time of writing.

E-Commerce and Product Video

Seedance 2.0, with caveats. ByteDance notes CapCut is particularly suited for cooking recipes, fitness tutorials, business and product overviews, and action-focused content — categories where AI video has historically struggled. Important caveat: Seedance 2.0's safety restrictions currently block video generation from images containing real faces, which affects any product video featuring human talent.

Limitations of Both Models

HappyHorse-1.0:

- No public API, no downloadable weights, no documented pricing
- Self-reported technical specs (15B parameters, 1080p output) unverified by third parties
- Requires an H100 GPU to run locally, making individual deployment impractical for most creators
- Clip length limited to 5–8 seconds, short even by short-form standards
- Team identity and organizational backing unconfirmed

Seedance 2.0:

- Global developer API not yet publicly available (expected Q2 2026 per multiple sources)
- Free-tier credits are shared across all Dreamina tools, and each failed generation consumes full credits; experienced users recommend budgeting 10–20% overhead for retries
- Safety restrictions currently block real human face references, limiting some creator use cases
- US access remains limited due to ongoing IP-related review
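
The retry overhead on Seedance's free tier turns into a simple daily budget. Below is a minimal sketch. The per-clip cost of 25 credits is a hypothetical placeholder, since Dreamina's actual cost per generation is not stated here, and the function name is illustrative. Only the 225 daily credits and the 10–20% overhead range come from the figures above.

```python
import math


def planned_clips_per_day(daily_credits: int, credits_per_clip: int, retry_overhead: float) -> int:
    """Clips you can plan on per day when failed attempts still cost full credits.

    retry_overhead is the extra fraction of attempts to reserve for retries
    (0.10 to 0.20 per the recommendation above).
    """
    attempts = daily_credits // credits_per_clip  # total generations the credits allow
    return math.floor(attempts / (1.0 + retry_overhead))


DAILY_CREDITS = 225    # Dreamina free tier, shared across all tools
CREDITS_PER_CLIP = 25  # hypothetical; substitute the real per-generation cost

for overhead in (0.10, 0.20):
    clips = planned_clips_per_day(DAILY_CREDITS, CREDITS_PER_CLIP, overhead)
    print(f"{overhead:.0%} retry overhead: plan for {clips} finished clips per day")
```

Because the credits are shared across all Dreamina tools, any use of other tools on the same day lowers `daily_credits` before this calculation applies.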

FAQ

Which has better motion quality for short clips?

On blind user voting, HappyHorse-1.0 currently scores higher in the no-audio categories. But community testing shows mixed results — some testers found Seedance 2.0 more consistent on character details. The Elo lead is real; whether it holds as sample sizes grow is the open question. For verified, reliable motion quality today, Seedance 2.0 is the safer choice.

Can I export vertical video from both?

Both list 9:16 as a native aspect ratio, but only one is verified. Seedance 2.0's vertical output through Dreamina and CapCut is confirmed and tested. HappyHorse claims 9:16 support on its official site, but this has not been independently verified through downloadable weights or API access as of April 8, 2026.

Which is cheaper right now?

Seedance 2.0 is the only one with a published price. Dreamina basic starts at approximately $9.60/month. HappyHorse has a free browser demo but no documented paid tier, API pricing, or subscription plan. You cannot currently build a paid workflow around HappyHorse.

Which output is easier to edit in post?

Seedance 2.0, primarily because of its CapCut integration. You generate and edit in the same environment. HappyHorse outputs a clip you download and import manually. Both give you a video file — but Seedance's ecosystem significantly reduces the steps between generation and publication.

Can I use both for commercial content?

Seedance 2.0: yes, on paid plans. Commercial licensing is included in the paid tiers of both Dreamina and Jimeng (ByteDance's China-market counterpart to Dreamina). HappyHorse: the official site mentions commercial usage rights in some secondary documentation, but this cannot be confirmed until weights, pricing, and terms of service are publicly available. Do not use HappyHorse for commercial production until those documents exist.

Conclusion

The leaderboard answer and the creator answer are different.

HappyHorse-1.0 currently leads the Artificial Analysis Text to Video Arena without audio, and Seedance 2.0 720p takes #1 in the with-audio category at Elo 1,219. That's the benchmark picture.

The practical picture: Seedance 2.0 has a working access path, documented pricing, CapCut integration, multimodal input, 15-second clips, and a track record of thousands of blind votes. HappyHorse has a browser demo, unverified specs, no public weights, and no production API — but genuinely impressive blind test results that are hard to dismiss.

If you're building a workflow today: Seedance 2.0. If you're watching what becomes accessible in the next 60–90 days: HappyHorse is worth tracking. The moment weights drop and independent testing becomes possible, this comparison changes.

I'll update this when either model ships a production API. In the meantime — use what you can actually run.
