LTX 2.3 vs WAN 2.2: Best Open-Source AI Video in 2026?

Hey, it’s Dora here!

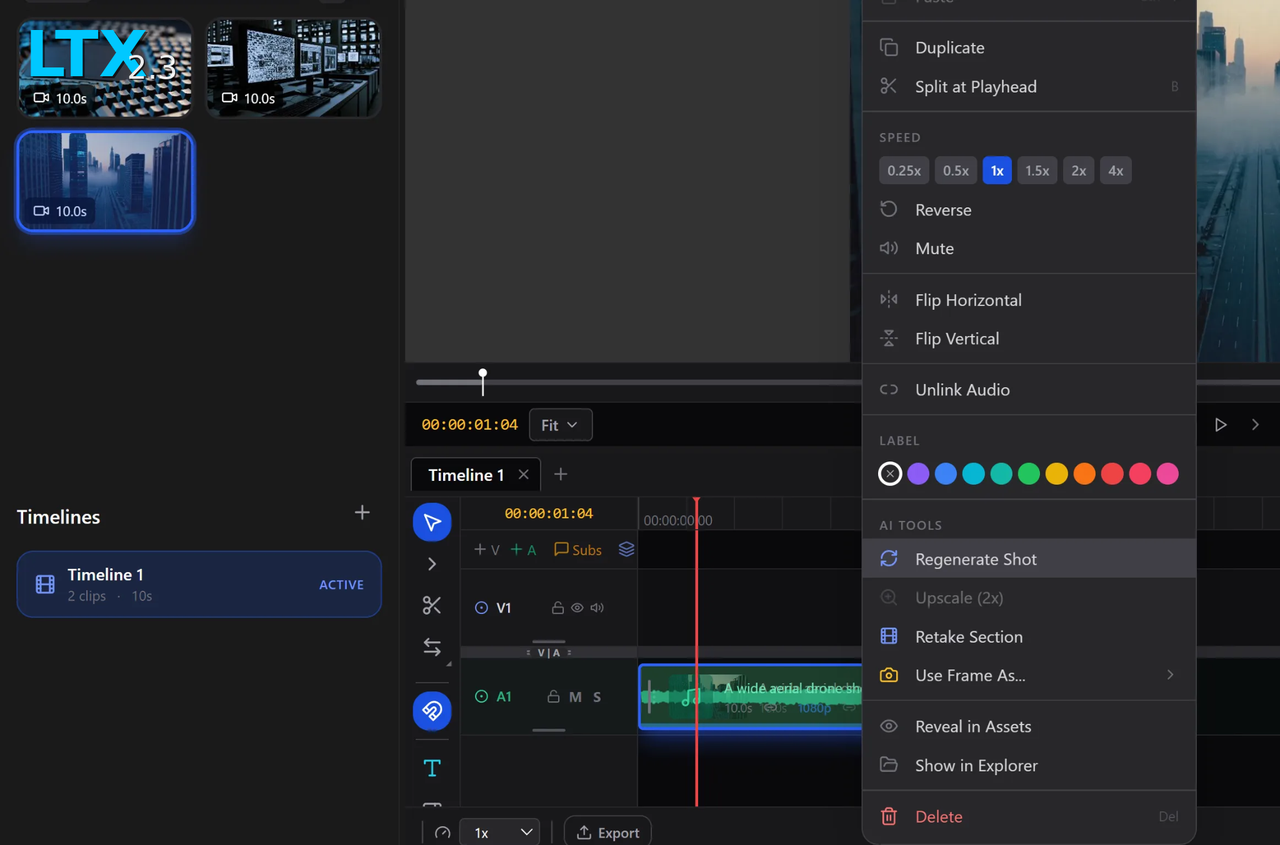

Last Tuesday, I was re-prompting the same jacket zipper for the sixth time on WAN 2.2. Clean motion, great physics, but that zipper kept dissolving into fabric mush every single render. Someone dropped LTX 2.3 in my Discord. "18x faster, native portrait, audio built in." Eye-roll. But it was late and I had nothing to lose. Same prompt. Under a minute. Zipper held.

That's how this comparison started. I spent the next week running both models through identical prompts on the same hardware. Here's what actually matters.

Why This Comparison Matters Right Now

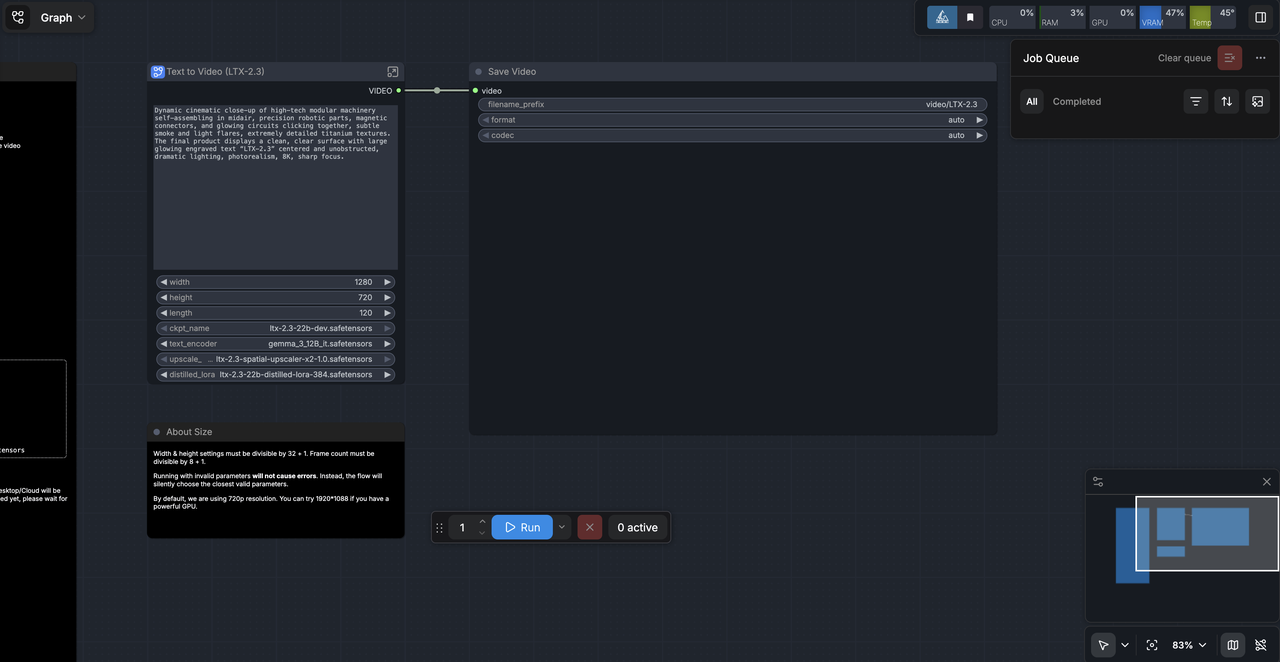

The open-source AI video space just had a serious shakeup. In March 2026, Lightricks released LTX-2.3, a 22-billion-parameter model generating synchronized audio and video at resolutions up to 4K at 50 frames per second. Meanwhile, WAN 2.2, Alibaba's MoE-powered model released in 2025, has been the community's go-to workhorse for cinematic motion.

For creators juggling 5–10 uploads a day, this choice isn't academic. It's workflow. Speed, VRAM budget, and output format all determine whether you hit publish before midnight or stay up chasing renders.

Side-by-Side Feature Table

| Feature | LTX 2.3 | WAN 2.2 |
| --- | --- | --- |
| Architecture | DiT (Diffusion Transformer) | MoE (Mixture of Experts) |
| Parameters | 22B | 27B total (~14B active) |
| Max Resolution | 4K / 1080p | 720p (native) |
| Native Audio | ✅ Built-in, one-pass | ❌ Requires external tooling |
| Native 9:16 | ✅ | ❌ |
| License | LTX Model License (free under $10M annual revenue) | Apache 2.0 |
| ComfyUI Support | ✅ Official nodes | ✅ Mature ecosystem |

Speed and Hardware Requirements

LTX 2.3 — Faster, But Needs Specific VRAM Config

Speed is where LTX 2.3 makes its biggest case. A 5-second clip at 720p that took roughly 4–5 minutes on WAN 2.2 completes in under a minute on LTX 2.3. That shift in rhythm, running three variants while your coffee cools instead of one before lunch, genuinely changes how you iterate.
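To put that rhythm change in numbers, here's a quick back-of-the-envelope sketch. The 30-minute budget is my own made-up example, 4.5 minutes is just the midpoint of the 4–5 minute range above, and 1.0 minute is a conservative stand-in for "under a minute". The function name is hypothetical, not part of either model's tooling.

```python
def variants_in_budget(budget_minutes: float, minutes_per_render: float) -> int:
    """Count how many full renders fit in a time budget when run back to back."""
    if minutes_per_render <= 0:
        raise ValueError("minutes_per_render must be positive")
    return int(budget_minutes // minutes_per_render)


# Assumed inputs (not measurements): see the paragraph above this block.
BUDGET_MINUTES = 30.0
WAN_MINUTES_PER_CLIP = 4.5  # midpoint of the quoted 4-5 minute range
LTX_MINUTES_PER_CLIP = 1.0  # conservative reading of "under a minute"

wan_variants = variants_in_budget(BUDGET_MINUTES, WAN_MINUTES_PER_CLIP)
ltx_variants = variants_in_budget(BUDGET_MINUTES, LTX_MINUTES_PER_CLIP)

print(f"WAN 2.2: {wan_variants} variants, LTX 2.3: {ltx_variants} variants")
# WAN 2.2: 6 variants, LTX 2.3: 30 variants
```

Swap in your own render times; the point is only that the gap compounds quickly over a working session.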

VRAM reality check: The Windows version of LTX Desktop requires a minimum of 32GB VRAM, 32GB RAM, and 60GB storage. That's the official app. With FP8 quantization, the community has pushed it down to 8GB — but expect slower outputs and tighter limits.

WAN 2.2 — More Flexible Hardware Support?

WAN 2.2 actually wins here for lower-spec setups. The TI2V-5B variant achieves 720p output at 24fps in under 9 minutes on an RTX 4090, among the fastest for that resolution on consumer hardware. The 1.3B model can run on even lighter cards, making WAN 2.2 the realistic choice if you're on 8–12GB VRAM.

The 18x Speed Claim — What It Actually Means in Practice

Okay, let's be real about this number. LTX-2 demonstrates a clear performance advantage, delivering dramatically higher step throughput than WAN 2.2 14B under identical generation settings on H100. But an H100 benchmark doesn't translate 1:1 to your local RTX. What you'll actually feel is the difference between "I can test 15 prompt variations before a deadline" and "I'm committing to this one."

Visual Quality and Motion Consistency

Text-to-Video Output Quality

WAN 2.2 camera arcs felt intentional — not just "the camera moved." LTX-2.3 kept framing steady, which is great for product clips, but WAN 2.2 understood weight and drift the way directors talk about blocking.

If your prompt includes exact cinematography language — dolly, tilt, rack focus — WAN 2.2 tends to follow it more faithfully. LTX 2.3 wins on visual sharpness and texture rendering, especially for close-up product work.

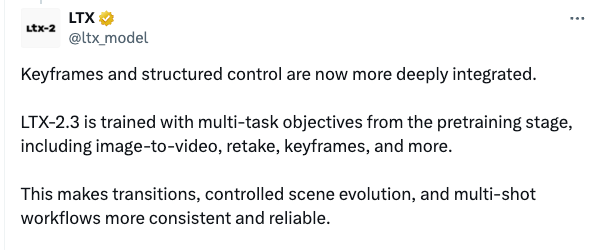

Image-to-Video Stability

This is where LTX 2.3 made the biggest leap from its predecessor. LTX-2.3 reportedly features less freezing, less Ken Burns effect, and more realistic motion — with better visual consistency from the input frame, meaning fewer generations to throw away.

WAN 2.2 has always been strong at I2V, but texture drift on fine details (zippers, fabric weave, small text) was a known pain point. LTX 2.3's updated VAE addresses this directly.

Vertical Video and Social Format Support

LTX 2.3 Native 9:16 vs WAN 2.2

For TikTok and Reels creators, this is the deciding factor. LTX-2.3 can generate vertical video directly at native 9:16 — especially useful for YouTube Shorts, Instagram Reels, and TikTok — instead of generating landscape video and cropping it later.

WAN 2.2 has no native portrait mode. You're cropping from landscape, which means lost resolution and awkward reframing. For anyone producing 5+ vertical clips a day, LTX 2.3's native support alone might justify the switch.
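Here's a rough sketch of what "lost resolution" means when you crop. It assumes a 1280x720 landscape render (my assumption for a 720p clip) and a simple centered crop; the function names are hypothetical helpers, not anything from WAN 2.2 or LTX 2.3.

```python
def center_crop_to_aspect(width: int, height: int,
                          aspect_w: int = 9, aspect_h: int = 16) -> tuple[int, int]:
    """Return (crop_w, crop_h) of the largest crop with the target aspect ratio."""
    # Compare width/height against aspect_w/aspect_h via cross-multiplication
    # so everything stays in integers.
    if width * aspect_h > height * aspect_w:
        # Source is wider than the target: keep full height, trim the sides.
        crop_h = height
        crop_w = height * aspect_w // aspect_h
    else:
        # Source is as narrow as or narrower than the target: keep full width.
        crop_w = width
        crop_h = width * aspect_h // aspect_w
    return crop_w, crop_h


def retained_fraction(width: int, height: int) -> float:
    """Fraction of the source pixels that survive a 9:16 crop."""
    crop_w, crop_h = center_crop_to_aspect(width, height)
    return (crop_w * crop_h) / (width * height)


crop_w, crop_h = center_crop_to_aspect(1280, 720)
kept = retained_fraction(1280, 720)
print(f"{crop_w}x{crop_h}, {kept:.0%} of pixels kept")
# 405x720, 32% of pixels kept
```

So a 720p landscape frame leaves you a 405-pixel-wide vertical strip before any upscaling, which is why the crop route looks soft on a phone screen.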

Audio Generation

LTX 2.3 In-Generation Audio Sync

This is LTX 2.3's most underrated advantage for everyday creators. The model generates ambient sound, dialogue, and effects in the same pass as the video. No routing to a separate audio node. No DAW session afterward. For draft reviews and quick client turnarounds, the time savings add up fast.

WAN 2.2 Audio Capabilities

WAN 2.2 has no native audio generation. It requires routing through an audio node or adding sound after the video render, an extra workflow step that LTX 2.3 eliminates entirely. For creators who already have audio pipelines, this might not matter. For solo operators running lean, it does.

Licensing and Commercial Use

LTX 2.3 Model License vs WAN 2.2 Apache 2.0

Both models are commercially usable — but the terms differ. LTX-2.3 is free for companies with under $10M in annual revenue under the LTX Model License, with a separate commercial licensing program for larger enterprises.

WAN 2.2 is simpler. The Wan2.2 series models are based on the Apache 2.0 open-source license and support commercial use — freely allowing use, modification, and distribution, including for commercial purposes, as long as you retain the original copyright notice and license text.

For most independent creators and small studios, both are effectively free to use commercially. Larger companies building products on top of LTX 2.3 should review the licensing tier carefully.

Which One Fits Your Workflow?

Solo Creators Prioritizing Speed

Pick LTX 2.3 if you're producing short-form social content, need native 9:16 output, want audio baked in without extra steps, and are running on a newer high-VRAM GPU. The official LTX-2.3 model page has the full spec breakdown and API access info.

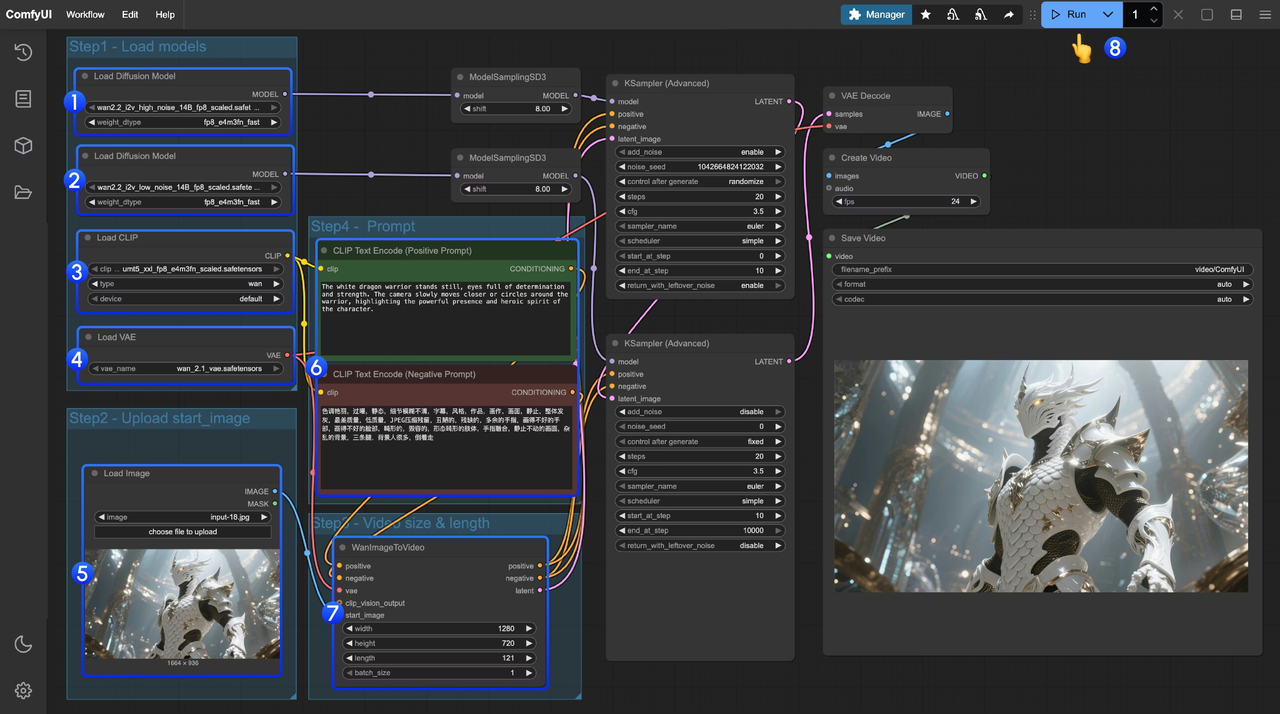

Teams Needing Flexibility and Hardware Options

Pick WAN 2.2 if cinematic motion quality is non-negotiable, you're working on lower-spec hardware, or your team already has an established ComfyUI workflow. For ComfyUI setup details, the official Wan2.2 ComfyUI workflow guide covers everything from model download to node configuration.

Both models are available on Hugging Face. For a deeper technical breakdown of WAN 2.2's MoE architecture and training data, the NYU Shanghai research overview is worth bookmarking.

FAQ

Is LTX 2.3 really 18–19x faster than WAN 2.2?

On high-end hardware like H100s under controlled benchmarks, the speed gap is significant. In real-world testing on a consumer RTX 4090, the difference is real but closer to 5–8x depending on resolution and scheduler settings. What matters more than the number: you can iterate dramatically faster with LTX 2.3, which changes how you work regardless of the exact multiplier.
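As a sanity check, you can run the 5–8x range against the 4–5 minute WAN 2.2 render time quoted earlier in this post. This is a sketch under the assumption that both figures describe comparable clips and settings; the helper name is hypothetical.

```python
def estimated_seconds(baseline_seconds: float, speedup: float) -> float:
    """Estimate render time after applying a speedup factor to a baseline."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_seconds / speedup


# Assumed inputs taken from this post, not new measurements.
baseline_range = (4 * 60, 5 * 60)  # WAN 2.2: 4-5 minutes, in seconds
speedup_range = (5.0, 8.0)         # consumer-GPU speedup range

best = estimated_seconds(baseline_range[0], speedup_range[1])   # fastest case
worst = estimated_seconds(baseline_range[1], speedup_range[0])  # slowest case

print(f"Estimated LTX 2.3 render: {best:.0f}s to {worst:.0f}s")
# Estimated LTX 2.3 render: 30s to 60s
```

That range lines up with the "under a minute" renders described above, which is a decent sign the two numbers aren't contradicting each other.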

Which model has better image-to-video consistency?

LTX 2.3 made significant improvements here over its predecessors. Textures like denim, linen, and brushed steel improved the most, with far less "texture drift" every 8–10 frames, and small objects like watch hands and buttons hold shape longer before melting into their surroundings. For stable I2V with fine details, LTX 2.3 now edges ahead.

Can I use both LTX 2.3 and WAN 2.2 commercially?

Yes — with caveats. WAN 2.2 is straightforwardly Apache 2.0. LTX 2.3 is free commercially for projects under $10M annual revenue; above that, a licensing agreement is required. Always check the current terms before shipping a product built on either model.

Which is easier to run locally?

WAN 2.2 wins for accessibility. The 1.3B and 5B variants run on 8–12GB VRAM cards. LTX 2.3's full model needs 32GB+ for the official desktop app, though quantized community builds work on lower specs with reduced quality. For a practical guide to running LTX 2.3 on cloud GPU infrastructure, DigitalOcean's setup tutorial is one of the clearest walkthroughs available.

Should I switch from WAN 2.2 to LTX 2.3?

If you create vertical social content, need built-in audio, or live and die by iteration speed — yes, seriously consider it. If cinematic motion quality is your primary output metric and you have an existing WAN 2.2 workflow dialed in — not necessarily. Many creators run both: LTX 2.3 for fast drafts and social formats, WAN 2.2 for final cinematic outputs.

Conclusion

LTX 2.3 is the better tool for speed, social format output, and streamlined audio workflows. WAN 2.2 is still the standard for cinematic motion realism and flexible hardware support.

Neither model is objectively superior — they just have different strengths. If you're a solo creator batch-producing content with a tight turnaround, LTX 2.3's native 9:16, one-pass audio, and iteration speed make it the practical choice in 2026. If you're producing narrative or cinematic work where every camera move matters, WAN 2.2 still delivers a feel that's hard to replicate.

The real answer? Try both. The open-source advantage is that you can.
