NemoClaw vs NVIDIA NeMo: Which Do You Actually Need?

Hello, everyone. I'm Dora. I saw someone ask in a Discord yesterday: "Should I learn NemoClaw or NeMo for AI video work?" And I thought, "That's like asking if you should learn a hammer or a toolbox." They're not competing options. They're different layers of the same stack, and most creators will never touch either one directly.

But the confusion is real. Both names start with "Nemo," both come from NVIDIA, and both involve AI. So let me break down what each one actually is, where they sit in the ecosystem, and which one — if either — you should care about as a video creator.

Why People Confuse These Two

Same NVIDIA naming

NVIDIA loves the "Nemo" branding. There's NVIDIA NeMo (the framework), NemoClaw (the agent platform, not even launched yet), Nemotron (foundation models), and BioNeMo (life sciences tools). They're all part of the same ecosystem, but they do completely different things.

The naming overlap makes it easy to assume they're versions of the same product. They're not. NeMo is infrastructure for building AI models. NemoClaw is infrastructure for deploying AI agents. One helps you train models, the other helps you automate workflows. For a clearer breakdown of what NemoClaw actually is and how it differs from other agent platforms, our dedicated guide covers its positioning for video teams specifically.

Different layers of the stack

Think of it like this: NeMo is where researchers and engineers build and customize AI models, like the large language models behind chat assistants. NemoClaw is where companies would deploy models like those to actually do work: sorting files, responding to emails, or automating repetitive tasks.

If you're a video creator, you don't build models and you probably don't deploy enterprise agents either. You use finished tools like CapCut, Descript, and Runway, built by teams who might use platforms like NeMo (or, eventually, NemoClaw) under the hood. In practice, creators interact with AI through workflow tools that automate editing and production, not through the platforms underneath (see how AI video editing automation works).

You're three layers removed from that infrastructure.

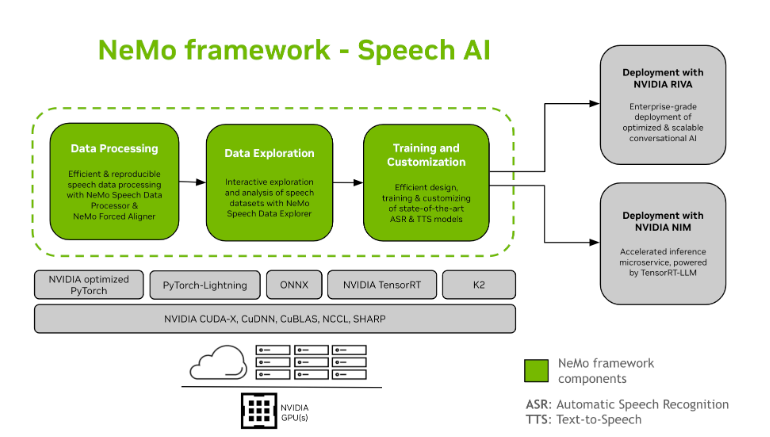

What NVIDIA NeMo Actually Is

Framework and training side

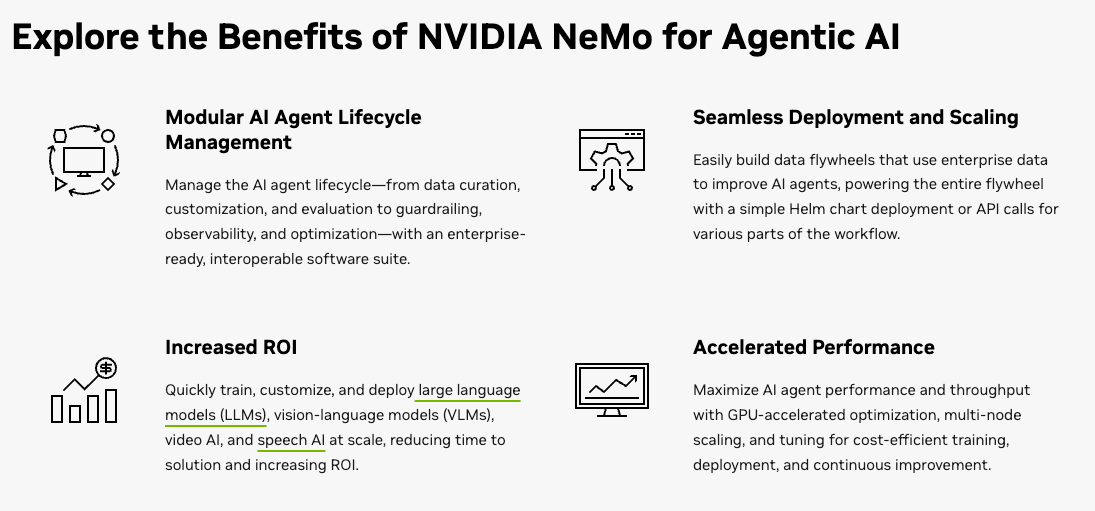

NVIDIA NeMo Framework is an end-to-end platform for building, customizing, and deploying generative AI models. It supports large language models (LLMs), multimodal models, computer vision, automatic speech recognition (ASR), and text-to-speech (TTS).

In practice, it's what AI researchers and ML engineers use when they need to:

Train custom models on proprietary data

Fine-tune existing models for specific domains

Build speech-to-text or text-to-speech systems

Deploy models into production environments

According to NVIDIA's official NeMo documentation, NeMo handles everything from data curation to distributed training across thousands of GPUs. It's built on top of PyTorch and integrates with NVIDIA's Megatron Core library for massive-scale model training.

Agent Toolkit and lifecycle

NeMo also includes tools for managing the full AI agent lifecycle — from data curation and customization to monitoring and optimization. That's where the confusion with NemoClaw starts, because both platforms touch "agent" territory. But NeMo's agent toolkit is about managing the model side (training, evaluation, deployment), not the workflow automation side (executing tasks, coordinating actions).

Who uses it today

Companies building custom AI models. For example, Amazon used NeMo Framework and NVIDIA GPUs to train Amazon Titan foundation models. ServiceNow uses it to build custom LLMs for workflow automation. SK Telecom trained billion-parameter models with NeMo for smart speakers and call centers.

If you're not training models from scratch or fine-tuning LLMs on proprietary datasets, you're almost certainly not using NeMo directly. It's infrastructure for builders, not end users.

What NemoClaw Appears to Be

Agent platform positioning

NemoClaw is NVIDIA's upcoming open-source platform for deploying AI agents that automate tasks for enterprise teams. According to reports from CNBC and Wired on March 10th, 2026, NVIDIA has been pitching NemoClaw to enterprise software companies — Salesforce, Cisco, Google, Adobe, CrowdStrike — ahead of its GTC 2026 conference.

The pitch: let companies dispatch AI agents to perform tasks for their employees. File organization, data extraction, workflow automation, multi-step processes. The kind of stuff that eats up hours but doesn't require creative thinking. For everything we know about the platform before launch, including enterprise partnerships and expected features, see our comprehensive pre-launch analysis.

How it differs from model tooling

NeMo builds models. NemoClaw deploys agents that use those models to actually do work.

Here's the clearest way I can explain it: NeMo is like the factory that builds car engines. NemoClaw is like the platform that lets companies deploy self-driving cars to run deliveries. One makes the core technology, the other orchestrates how that technology gets used in real-world workflows.

For video teams, this would mean agents that:

Sort and tag raw footage automatically

Generate rough transcripts with speaker labels

Organize assets by project, scene, or usability

Route files between platforms (upload to Dropbox, notify team on Slack, archive in cloud storage)
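NemoClaw's interface hasn't been published, so the following is not NemoClaw code and doesn't call any NVIDIA library. It's a toy Python sketch of the "NeMo builds models, NemoClaw deploys agents" split, assuming a keyword-matching stub in place of a real model and fake tools in place of real Dropbox or Slack integrations. The names `stub_model`, `TOOLS`, and `run_agent` are hypothetical. The model only answers "which tool fits this step?"; the agent loop is what turns that answer into an action.

```python
from __future__ import annotations

from typing import Callable

# "Model layer" (hypothetical stub): maps a task description to a tool name.
# A real setup would use a trained model here; keyword matching is a stand-in.
KEYWORDS = {
    "tag": {"tag", "sort"},
    "transcribe": {"transcribe", "transcript"},
    "upload": {"upload", "dropbox"},
    "notify": {"notify", "slack"},
}


def stub_model(prompt: str) -> str:
    """Return the first tool whose keywords appear as whole words in the prompt."""
    words = set(prompt.lower().split())
    for tool_name, keywords in KEYWORDS.items():
        if words & keywords:
            return tool_name
    return "none"


# "Tool layer" (hypothetical fakes): no real file, Dropbox, or Slack I/O happens.
TOOLS: dict[str, Callable[[str], str]] = {
    "tag": lambda item: f"tagged {item}",
    "transcribe": lambda item: f"transcript for {item}",
    "upload": lambda item: f"uploaded {item}",
    "notify": lambda item: f"notified team about {item}",
}


def run_agent(steps: list[str], item: str,
              model: Callable[[str], str] = stub_model) -> list[str]:
    """"Agent layer": ask the model which tool fits each step, then run that tool."""
    log = []
    for step in steps:
        tool_name = model(step)
        if tool_name not in TOOLS:
            log.append(f"skipped: {step}")  # no matching tool; a human would handle it
            continue
        log.append(TOOLS[tool_name](item))
    return log


if __name__ == "__main__":
    steps = ["Tag the raw footage", "Transcribe with speaker labels",
             "Upload to Dropbox", "Notify the team on Slack", "Write a haiku"]
    for line in run_agent(steps, "interview.mp4"):
        print(line)
```

Running it prints one line per step, with the last step skipped because no tool matches. In a real platform the stub would be an actual model and the tools would be authenticated integrations; the loop structure is the part this sketch is meant to show.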

But here's the catch: as of March 10th, 2026, NemoClaw isn't publicly available. No beta access, no sign-up page, no confirmed features. Everything we know comes from leaks and press reports about NVIDIA's pitches to enterprise partners. It might launch at GTC 2026 on March 16th, but even then it will likely go first to enterprise partners who contribute code, not solo creators.

Core Differences at a Glance

| Aspect | NVIDIA NeMo | NemoClaw (as reported) |
|---|---|---|
| Purpose | Build and train AI models | Deploy AI agents for task automation |
| Status | Live, actively developed (version 2.6.1 as of Feb 2026) | Pre-launch; may launch at GTC 2026 (mid-March) |
| Target user | ML engineers, AI researchers, enterprise AI teams | Enterprise software companies, workflow automation teams |
| Deployment | Cloud or on-premise, GPU-optimized | Cloud or on-premise, reportedly hardware-agnostic |
| What you build | Custom LLMs, speech models, multimodal models | Autonomous agents that execute multi-step tasks |
| Access model | Open-source (Apache 2.0), free to use | Open-source, with early access for enterprise partners |

Which One Should You Watch?

If you build models

Use NeMo. If you're training custom LLMs, building domain-specific speech recognition systems, or fine-tuning models on proprietary data, NeMo Framework is the platform you want. It's production-ready, well-documented, and actively maintained by NVIDIA.

You'll need Python 3.10+, PyTorch 2.6+, and access to NVIDIA GPUs for training. The learning curve is steep — this isn't a drag-and-drop tool. You're writing code, configuring distributed training setups, and managing model checkpoints.
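If you do go the NeMo route, a quick pre-flight check can save a failed install. This is a minimal sketch, assuming the minimums quoted above (Python 3.10+, PyTorch 2.6+) and treating PyTorch's `torch.cuda.is_available()` as a good-enough proxy for "has a usable NVIDIA GPU." It doesn't check NeMo itself, CUDA drivers, or containers, and `parse_version` and `check_environment` are hypothetical helper names.

```python
from __future__ import annotations

import re
import sys
from importlib import metadata

# Minimums as quoted in this article; NeMo's own install docs are the authority.
MIN_PYTHON = (3, 10)
MIN_TORCH = (2, 6)


def parse_version(text: str) -> tuple[int, ...]:
    """Keep the leading numeric part: '2.6.1+cu124' -> (2, 6, 1), '2.6.0rc1' -> (2, 6, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def check_environment() -> list[str]:
    """Return a list of problems; an empty list means the stated minimums are met."""
    problems = []
    if sys.version_info[:2] < MIN_PYTHON:
        problems.append(
            f"Python {sys.version.split()[0]} is older than {MIN_PYTHON[0]}.{MIN_PYTHON[1]}"
        )
    try:
        torch_version = metadata.version("torch")
    except metadata.PackageNotFoundError:
        problems.append("PyTorch is not installed")
        return problems
    if parse_version(torch_version) < MIN_TORCH:
        problems.append(f"PyTorch {torch_version} is older than {MIN_TORCH[0]}.{MIN_TORCH[1]}")
    import torch  # safe to import now that we know the package is installed
    if not torch.cuda.is_available():
        problems.append("PyTorch can't see a CUDA-capable NVIDIA GPU")
    return problems


if __name__ == "__main__":
    issues = check_environment()
    print("Meets the stated minimums." if not issues else "\n".join(issues))
```

Passing this check doesn't guarantee NeMo will install cleanly; driver and container versions matter too, and NVIDIA's installation guide is the reference for those.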

If you automate workflows

Wait for NemoClaw or use existing agent tools. If you're looking to automate repetitive workflow tasks — file sorting, data extraction, multi-step processes — NemoClaw is positioned for that. But it's not available yet.

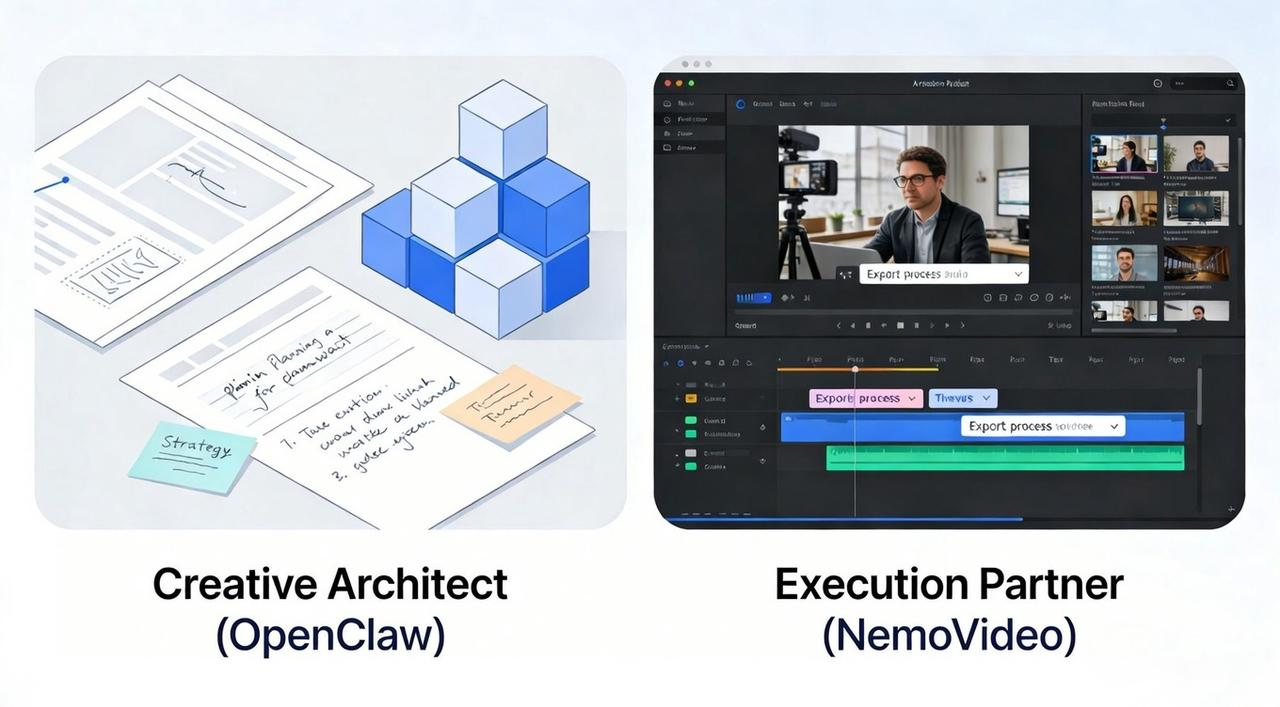

In the meantime, existing tools like Zapier, Make, n8n, or agent frameworks like LangChain handle workflow automation today. Some creator pipelines already combine agent systems with automated video generation workflows (for example, an OpenClaw-based video automation pipeline).

They may not match what NemoClaw is being pitched to offer (enterprise-grade security, GPU-accelerated agents, deep NVIDIA ecosystem integration), but they work right now.

If you create videos

Neither. You're not the target user for either platform.

NeMo is for people building AI models. NemoClaw is for companies deploying enterprise agents. As a video creator, you're waiting for the tools built on top of these platforms. That's where the actual usability lives.

What to watch instead: tools that integrate NeMo-trained models or NemoClaw-style automation into creator-friendly interfaces. Many of these tools focus on turning existing content into video formats automatically — for example systems that convert articles or scripts directly into short-form videos (see how blog-to-video AI workflows work).

That said, finished AI video platforms like NemoVideo already deliver much of that automation without requiring you to manage infrastructure or deploy agents yourself.

Examples of the platform-powered tools to watch for:

Video editors that use NeMo-trained speech models for better auto-transcription

Asset managers that deploy NemoClaw-style agents to organize footage automatically

Workflow platforms that chain AI tasks (rough cut generation, scene detection, metadata tagging) without you touching code

Most of those don't exist yet, or if they do, they're in early beta. But they're what's most likely to change creator workflows over the next 12 months. The infrastructure (NeMo, NemoClaw) is the foundation; the consumer tools built on it are what creators will actually use.

My take as of March 10th, 2026:

I'm not learning NeMo. I'm not waiting for NemoClaw access. I don't build models and I don't deploy enterprise agents. What I'm watching is which tools I already use — CapCut, Descript, Frame.io — start absorbing the capabilities these platforms enable.

If CapCut suddenly gets way better at auto-generating edits because they integrated a NeMo-trained model, I'll notice. If Descript adds workflow agents that sort my footage automatically because they built on NemoClaw's enterprise-grade security infrastructure, I'll use it. But I won't interact with the underlying platforms directly.

That's the honest answer for most creators. NeMo and NemoClaw are enterprise infrastructure. The value trickles down through finished products. Watch for those products, not the platforms themselves.

What Creators Should Use Instead

Instead of waiting for enterprise infrastructure tools to become accessible, smart creators are already using finished AI video platforms that deliver the automation benefits today.

NemoVideo's Workspace gives you the agent-like automation and AI-powered editing that NemoClaw promises — but without needing to deploy enterprise agents or write code:

SmartPick (AI Rough Cut) — Automatically scrubs through footage, removes filler words, and extracts highlights

Smart Caption — Auto-transcribes with trending subtitle styles in one click

Viral+ Studio — Reverse-engineers high-performing content patterns

Inspiration Center — Generates data-backed hooks and scripts

Talk-to-Edit — Describe changes in plain language, no timeline required

Multi-platform optimization — One video, every format: TikTok, Reels, Shorts, LinkedIn

This is what "AI infrastructure for creators" actually looks like in practice — not NeMo or NemoClaw, but finished tools that use similar capabilities in a creator-friendly package.

Compare Your Options

Still evaluating workflow automation tools? See how different approaches stack up:

NemoVideo vs CapCut — AI-first editing vs traditional timeline tools

OpenClaw automation workflows — Agent-based automation for technical users

Is NemoVideo free? — Pricing and feature tiers explained

The bottom line: Don't wait for NemoClaw access you'll likely never get. Use the creator tools that deliver similar automation benefits right now.

👉 Start with NemoVideo — free workspace, no credit card needed