How to Use Wan 2.7 First and Last Frame Control

Hi, I'm Dora. I'll be honest — the first time I heard about "first and last frame control" in an AI video model, I rolled my eyes a little.

I'd been burned before. Tools that promised "precise output control" and delivered something that technically started with my image... and then immediately did whatever it wanted for the next 8 seconds. The gap between the promise and what I got out the other end was embarrassing.

So when I started testing Wan 2.7's first/last frame feature — officially called FLF2V (First-Last Frame to Video) — I went in skeptical. I ran 20+ generations over several days. Some were clean. Some were disasters. And now I have actual opinions.

Here's what I found.

What First and Last Frame Control Does in Wan 2.7 — And Why It's Different From Normal I2V

Standard image-to-video (I2V) gives the model a starting frame and says "go." You get motion, but where it ends up? That's the model's call. Sometimes it's great. Sometimes your product floats off screen. Sometimes your character is just... evaporates.

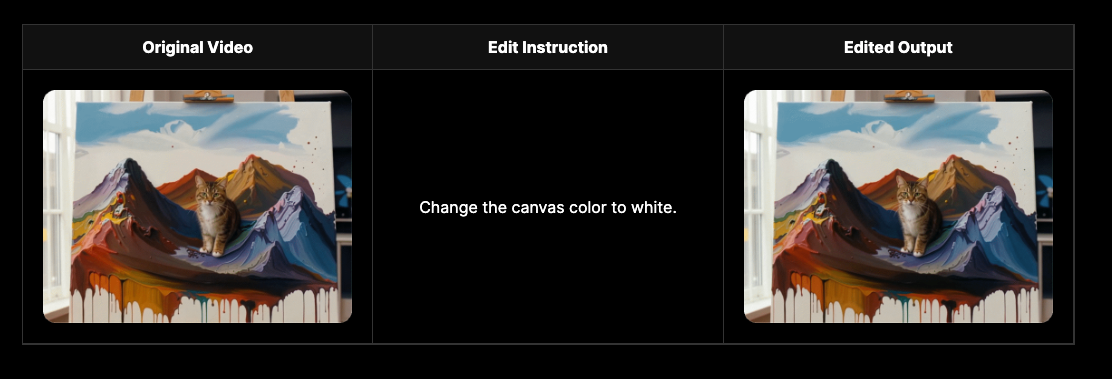

First/last frame control changes the contract. You give the model two images — one for the opening frame, one for the closing frame — and it generates the motion and transition between them. The model has to figure out how to get from Point A to Point B in a way that looks natural.

This is not just a filter on top of I2V. FLF2V uses a conditioning mechanism that encodes both images into the model's latent space alongside your text prompt simultaneously — so the "destination" isn't an afterthought. It's baked into every step of the generation.

Why does that matter for creators? Because now you can design a specific opening and a specific closing shot. Product in box → product out of box. Person standing still → person mid-jump. Calm landscape → storm. You define the story arc in two images. The AI handles the in-between.

Why This Matters for Short-Form Video Hooks

If you're making TikTok or Reels content, the first 1–2 seconds are everything. But the last frame matters too — it's what viewers see when they pause, screenshot, and share.

What I kept running into with standard I2V was that my carefully designed opening frame would get respected, but the video would drift by second 4 and end on something I didn't plan. That made looping harder and killed thumbnail control.

With FLF2V, I can now engineer a hook structure that looks intentional. Opening frame with high visual contrast → closing frame with the payoff. Think: before/after reveals, product unboxing finales, emotional character arcs within a 6-second clip. This maps directly to the opening sets curiosity, the close delivers the payoff — and for TikTok/Reels, both frames need to be planned, not accidental.

The pattern I've found most reliable: use a visually busy or mysterious start frame, and a clean/satisfying end frame. The model tends to generate calmer motion when it has a "settled" destination to aim for.

Before You Start — What You Need

Don't skip this section. This is where most people waste their first 10 generations.

Image format: PNG or JPG, both work. PNG gives you slightly cleaner edges if your subject has fine details (hair, fabric texture).

Resolution and aspect ratio: This is critical. Your start and end images need to match each other in aspect ratio. If you're targeting 9:16 vertical output for TikTok or Reels, crop both images to 9:16 before uploading. I cannot stress this enough — mismatched ratios produce warped, stretched transitions that look terrible. The z-image.ai Wan guide recommends keeping your input images at the exact aspect ratio of your target output, since the image acts as the primary visual anchor for the entire generation.

Recommended resolution for reference images: 720p or above. Low-res reference images produce low-confidence transitions — the model fills in detail inconsistently and you get flicker.

One more thing: Start and end images should share consistent lighting and color temperature. If your start frame is warm golden light and your end frame is cold blue, the transition will look jarring unless you specifically prompt for a lighting shift.

Step-by-Step: Using First/Last Frame Control in Wan 2.7

Step 1 – Prepare Your Start and End Reference Images

Go into whatever image editor you use and crop both images to the same ratio. For vertical video: 1080×1920 or 720×1280. For landscape: 1920×1080.

I prep both images side by side on my screen before uploading anything. I want the two frames to feel like they belong in the same world — same color grade, same light direction. If they look like they came from different photo shoots, the AI will struggle.

Step 2 – Write Your Motion Prompt

Here's where people underinvest time. The motion prompt doesn't describe your images — it describes what happens between them. Think of it as directing a camera operator, not describing a scene.

Structure I use:

[Subject] + [motion/action] + [camera movement] + [mood/lighting] + [style]

Example:

"The matte product box slowly rotates clockwise while a soft light sweeps left to right across its surface, the camera gently pushing in, cinematic 24fps, shallow depth of field"

Keep prompts specific to motion. Don't re-describe what's already in your images. And add a negative prompt with: "jump cut, flicker, distortion, warping, morphing, artifacts"

Step 3 – Set Duration and Resolution

For short-form hooks, I default to 4–6 seconds. Long enough to feel like motion, short enough to loop.

Next Diffusion's ComfyUI FLF2V tutorial notes that pushing beyond 120 frames can cause a "pingpong" effect where the model reaches the end frame too early and starts reverting — a real limitation to watch for. Stick to shorter clips unless you have a compelling reason to go long.

Resolution: 1080p for final output, 720p for quick tests. Don't burn credits on 1080p while you're iterating the prompt.

Step 4 – Generate and Evaluate the Output

First generation is almost never final. Here's my eval checklist:

Subject consistency: Does the main subject stay recognizable from frame to frame?

Transition naturalness: Does the motion look like it could have happened in real life?

End frame accuracy: Does the video actually land on (or near) your end reference image?

Artifacts: Any flicker, morph, or warp in the middle section?

If the transition feels "rushed" — like the model is cramming too much change into the last 2 seconds — your start and end frames are probably too dissimilar. Bridge the gap by making the images compositionally closer.

Step 5 – Export and Adapt for Platform

Export as MP4. For TikTok, make sure you're at 9:16 with H.264 encoding. For YouTube Shorts, same ratio works. For Instagram Reels, same.

One thing I do: export a still of the last frame and use it as my thumbnail separately. Since you designed that frame intentionally, it makes a much stronger thumbnail than whatever the platform auto-grabs.

Common Mistakes and How to Fix Them

Problem: Transition looks unnatural, frames "jump" mid-clip Fix: Your start and end images are too compositionally different. Either bring them closer together, or add a motion prompt that explicitly describes a gradual change ("slowly," "gently," "subtle camera drift").

Problem: Motion doesn't match reference images Fix: Check aspect ratios first. This is the #1 cause. If ratios match and the issue persists, your prompt may be overriding the visual conditioning — simplify the motion prompt.

Problem: End frame isn't being respected Fix: This happens when the model "overshoots" toward the end frame too fast, then has nowhere to go. Shorten clip duration, or add low-noise settings if you're in ComfyUI. Using the high-noise model variant tends to produce more creative interpolation but worse end-frame fidelity.

Problem: Middle of the clip has visual artifacts Fix: This is often a VRAM/quality issue, not a prompt issue. Try at a slightly lower resolution for your draft, then upscale the final.

Limitations to Know

I want to be direct about where this breaks down, because no one needs another article that pretends AI tools are magic.

Content type boundaries: FLF2V works best when there's a single clear subject with relatively similar composition in both frames. Group scenes with multiple characters, or complex backgrounds, produce much more inconsistent results. The model has a harder time maintaining identity consistency for multiple subjects simultaneously.

Fine detail consistency: If your start image has small text, logos, or intricate patterns, those details will likely drift or distort mid-clip. The model doesn't "read" your reference frames with pixel-level accuracy — it works in latent space, which means fine details are approximate.

Very fast motion: If the motion you're implying between frames requires rapid movement (a person mid-sprint, for example), the model tends to produce blurry, artifact-heavy clips. FLF2V performs best with slow-to-medium motion — camera pans, gentle object movement, subtle environmental changes.

Free plan limitations: On most hosted platforms, FLF2V at 1080p is a paid feature or requires significant credits. Run your test iterations at 720p.

FAQ

What image formats work for frame references? PNG and JPG both work reliably. Avoid WebP unless the platform specifically supports it, as some implementations convert it in ways that affect quality.

Can I use this for vertical 9:16 video? Yes — and for TikTok/Reels, it's arguably the primary use case. Just make sure both reference images are already cropped to 9:16 before uploading. As noted by resources covering Wan 2.7 on Dzine, all outputs can render at 1080P up to 15 seconds, with 9:16 fully supported.

Is there a clip length limit when using frame control? Technically no hard cap, but practically — beyond 6–8 seconds, FLF2V transitions get less reliable. The model struggles to maintain smooth motion over long durations while respecting both frame anchors. Keep it short for hooks.

Does this feature work on the free plan? Depends on your platform. Most free tiers support it at 720p with limited generations per day. Check your specific platform's plan details.

How do I fix motion mismatch between start and end frames? Usually an aspect ratio issue first (check that). If ratios match, simplify your prompt — a long, complex motion prompt can override the frame conditioning. Also try adding "slow" and "gradual" to your motion description.

Bottom Line

Am I using FLF2V in my regular workflow now? Genuinely, yes.

It changed how I think about planning video content. Instead of generating and hoping, I now mock up two stills in Canva or Photoshop — my planned hook frame and my planned close frame — and use those as the generative input. My approval rate (the percentage of generations I actually use without heavy rework) went from around 30% to closer to 60%.

That said — if you're expecting the AI to perfectly match your reference frames frame-for-frame at fine detail levels, you'll be frustrated. Think of FLF2V as a strong creative direction tool, not a pixel-perfect compositor. Use it to define the arc. Expect some drift in the middle. That middle section is still where the AI is improvising.

Worth testing if you're serious about hook design. The control it gives you over narrative structure in a 4–6 second clip is real — and for short-form creators, that's the whole game.

Previous Posts:

Understand the full differences between Wan 2.7 and Wan 2.6 before using advanced features

Explore Wan 2.7 features for short-form video creation and hook design

Discover the best AI video generators for building controlled creative workflows

See how Runway alternatives compare for more flexible video generation control

Learn how to automate your video workflow with AI agents at scale