What Is HappyHorse-1.0? AI Video Model Ranking, Access, and What's Verified

Tuesday. I was mid-way through updating my weekly benchmark notes — the kind of tedious ritual I've done so many times I barely look at the screen anymore — when the leaderboard stopped making sense.

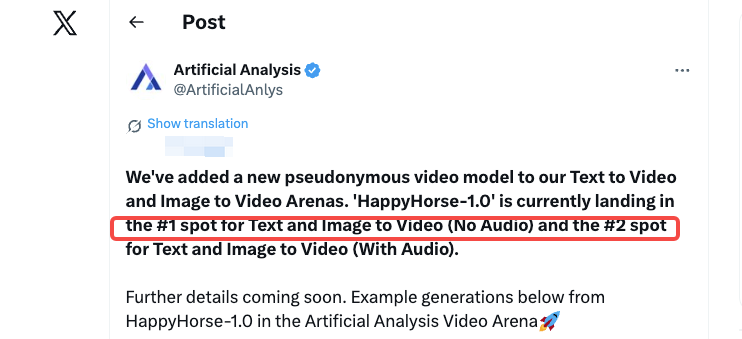

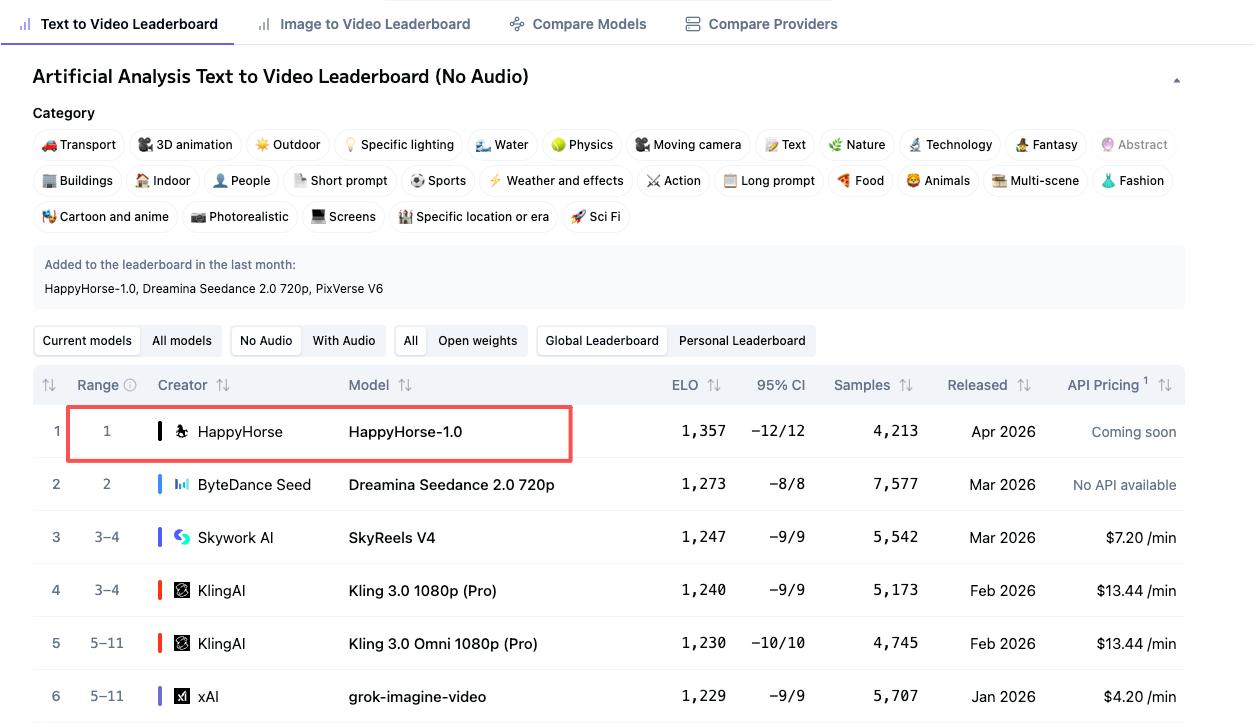

Artificial Analysis had quietly added a new pseudonymous video model, "HappyHorse-1.0," landing at #1 for both Text to Video and Image to Video (No Audio), and #2 for both categories with audio. No announcement. No paper. No LinkedIn post. Just a name and a score that shouldn't exist if you'd looked at the same table 48 hours earlier.

I closed the tab, reopened it. Still there. I spent the next few hours digging. Some of what I found is solid. Some of it I genuinely cannot confirm. This article clearly separates those two piles, because I'd rather you know what's unverified than have you act on something pieced together from speculation. Hello, I'm Dora, glad to be here!

If you make short-form video for a living, this model matters. Here's what's actually going on.

What Is HappyHorse-1.0?

HappyHorse-1.0 is an AI video generation model that appeared on the Artificial Analysis Video Arena leaderboard in early April 2026 under a pseudonymous name, with no public technical paper and no confirmed developer identity at the time of publication.

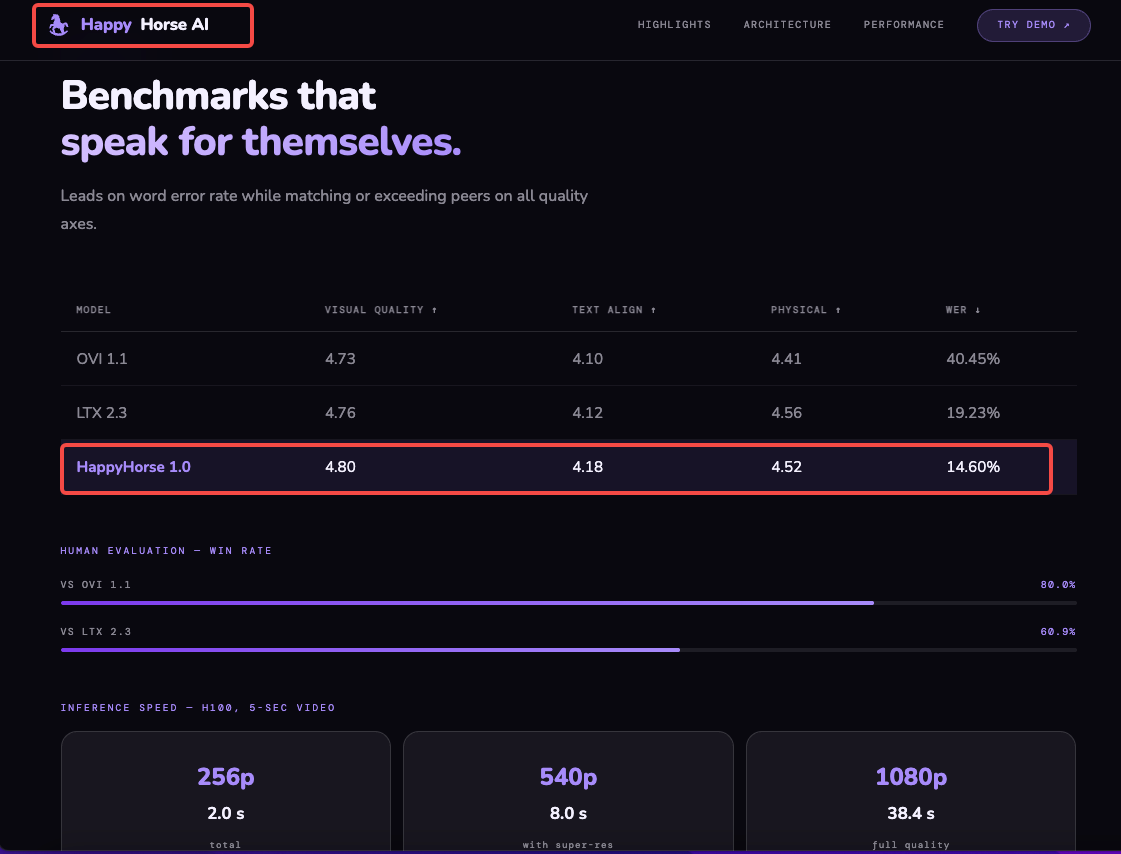

What makes it notable isn't the branding; it's the scores. HappyHorse-1.0 holds an Elo score of 1333 for text-to-video without audio, ranking first ahead of Dreamina Seedance 2.0 at 1273 and SkyReels V4 at 1244. On image-to-video without audio, the score is higher but the lead is narrower: HappyHorse-1.0 scores 1392, with Seedance 2.0 at 1355 in second place, a 37-point gap compared with 60 points in text-to-video.

The Elo system used here isn't self-reported. Rankings are derived from blind user votes — real people comparing two videos generated from the same prompt without knowing which model produced each one. Higher Elo scores indicate the model is preferred more often. That matters. This isn't a benchmark somebody gamed with cherry-picked prompts.

Where Did HappyHorse Come From?

The Artificial Analysis Leaderboard Debut

Over a weekend in early April 2026, HappyHorse appeared on the Artificial Analysis Video Arena as "Happy Horse V1" with a V2 variant — no announcement, no developer credits, no technical documentation attached.

The absence of context is unusual even by AI lab standards. Most models arrive with at least a blog post. HappyHorse arrived with a name and a score.

Who Built It? What's Known and What Isn't

Community observers quickly noted the model "definitely appears to be from Asia," with speculation pointing toward Wan 2.7 — but no confirmation has emerged. That's a community guess, not a sourced claim.

The official-adjacent website happy-horse.art claims a team identity and technical specs, but I could not independently verify who is behind it. The GitHub URL listed in the installation instructions (github.com/happy-horse/happyhorse-1) returned a 404 as of publication.

Current Rankings and What They Mean

Text-to-Video (Elo 1333 — #1 Without Audio)

In the text-to-video category without audio, HappyHorse-1.0 holds first place at Elo 1333, ahead of Seedance 2.0 at 1273, SkyReels V4 at 1244, Kling 3.0 1080p Pro at 1241, and PixVerse V6 at 1239.

That's not a marginal lead over Seedance. A 60-point Elo gap in a blind voting system is meaningful separation — it means HappyHorse won substantially more head-to-head comparisons.
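To put a number on "substantially more," here is a minimal sketch that converts an Elo gap into an expected head-to-head win rate. It assumes the standard logistic Elo formula with a 400-point scale; Artificial Analysis may fit its ratings differently, so treat the output as a rough guide rather than their official math. The function name `expected_win_rate` is mine, and the scores are the ones quoted in this article.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head wins for A over B.

    Assumption: standard logistic Elo with a 400-point scale. The arena's
    actual rating method may differ in its details.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# Scores as quoted in this article: (leader, runner-up).
matchups = {
    "T2V, no audio: HappyHorse-1.0 vs Seedance 2.0": (1333, 1273),
    "I2V, no audio: HappyHorse-1.0 vs Seedance 2.0": (1392, 1355),
    "T2V, with audio: Seedance 2.0 vs HappyHorse-1.0": (1219, 1205),
}

for label, (leader, runner_up) in matchups.items():
    rate = expected_win_rate(leader, runner_up)
    gap = leader - runner_up
    print(f"{label}: {gap}-point gap, ~{rate:.0%} expected wins for the leader")
```

Under that assumption, a 60-point gap works out to the leader winning somewhere between 58 and 59 percent of blind comparisons, a 37-point gap to about 55 percent, and a 14-point gap to about 52 percent. That is a clear preference at the top of the no-audio table and close to a coin flip in the with-audio one.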

Image-to-Video (Elo 1392 — #1 Without Audio)

In image-to-video without audio, HappyHorse-1.0 scores 1392, ahead of Seedance 2.0 at 1355, PixVerse V6 at 1338, grok-imagine-video at 1333, and Kling 3.0 Omni at 1297.

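Image-to-video is where this model reportedly shines most. Users describe HappyHorse as "unusually good at camera drift, body movement, and atmosphere, which helps short scenes feel more cinematic." That tracks with its first-place I2V ranking, and image-anchored generation seems to be a strength. One caution: the higher I2V number (1392 versus 1333) isn't evidence on its own, because Elo scores are relative to each arena's own pool of models and votes and don't compare directly across leaderboards.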

With-Audio Rankings (#2 Behind Seedance 2.0)

In text-to-video with audio, HappyHorse-1.0 sits second at Elo 1205, behind Seedance 2.0 at 1219. The gap is narrow — 14 points. Whether that closes or widens as more votes accumulate is an open question.

The audio-enabled category is where Seedance 2.0 currently holds an edge. That's the honest picture.

What's Verified vs. What's Unconfirmed

This is the section I'd want someone to write for me when I'm evaluating a new tool.

Confirmed: Leaderboard Data from Artificial Analysis

✅ HappyHorse-1.0 holds T2V #1 (no audio, Elo 1333) on the Artificial Analysis leaderboard

✅ HappyHorse-1.0 holds I2V #1 (no audio, Elo 1392) on the image-to-video leaderboard

✅ HappyHorse-1.0 holds T2V #2 (with audio, Elo 1205) behind Seedance 2.0

✅ Rankings are derived from blind human preference voting — not self-reported benchmarks

✅ A browser-accessible demo exists at happy-horse.net

Unconfirmed: Open Source Claims, 15B Parameters, GitHub/HuggingFace

❌ 15B parameters — cited by multiple third-party sites, not confirmed by a verifiable technical paper

❌ Open source status — claimed, but the GitHub repository URL returns 404 as of publication

❌ HuggingFace model weights — not publicly accessible at time of writing

❌ Developer identity — no named team, no verified affiliation; community speculation points toward an Asian AI lab, possibly connected to the Wan model lineage, but this is unverified

❌ "Wan 2.7" connection — community observers raised the possibility that HappyHorse could be Wan 2.7, describing it as "a sizeable leap from 2.6" if true — but no confirmation has emerged

My working stance: The leaderboard numbers are real. Everything else is claims or community inference until the developer releases something verifiable.

How to Access HappyHorse-1.0 Right Now

The most straightforward current access point is the browser demo at happy-horse.net, which lets you run text-to-video and image-to-video generations without a local setup.

The workflow is simple: write a prompt, add an optional image or video reference, tune output settings, and download a clip when the preview is ready.

Pricing isn't publicly posted in a clear, stable place — check the site directly before assuming free access continues. Credits-based models can change quickly.

For self-hosting: The GitHub installation instructions circulating online reference a repository that isn't live. Don't trust any third-party mirrors claiming to host model weights until an official release is confirmed. The Hugging Face Video Generation Arena Leaderboard remains a useful reference for tracking where HappyHorse stands as votes accumulate.
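If you want to re-check a repository link yourself rather than take my word for it, a small script is enough. This is a minimal sketch using only Python's standard library; the helper names `describe_status` and `check_repo` are my own, and you pass in whatever URL the installation instructions list. It needs network access to do anything useful.

```python
import urllib.error
import urllib.request


def describe_status(status: int) -> str:
    """Map an HTTP status code to a plain-language availability verdict."""
    if 200 <= status < 300:
        return "live"
    if status == 404:
        return "not found"
    return f"inconclusive (HTTP {status})"


def check_repo(url: str, timeout: float = 10.0) -> str:
    """Fetch the URL once and report whether the page appears to exist.

    Hypothetical helper for illustration only. It says nothing about who
    published the page or whether it is an official release.
    """
    request = urllib.request.Request(
        url, headers={"User-Agent": "repo-availability-check"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return describe_status(response.status)
    except urllib.error.HTTPError as err:
        # urlopen raises HTTPError for 4xx/5xx responses; the code is on the exception.
        return describe_status(err.code)
    except urllib.error.URLError as err:
        # DNS failure, refused connection, timeout, and similar.
        return f"unreachable ({err.reason})"
```

Calling `check_repo("https://...")` with the repository address returns one of the verdicts above. A "live" result only tells you a page exists at that address; it doesn't tell you the repository is official, so the warning about third-party mirrors still applies.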

What This Means If You Make Short-Form Video

Okay, so where does this actually land for someone posting 5–10 videos a week?

The honest answer: the rankings suggest the motion quality is genuinely strong for image-anchored clips. If you're the kind of creator who starts from a static asset — a product photo, a brand image, a character render — and needs it to move convincingly, HappyHorse's I2V score is worth paying attention to.

The use cases that seem most relevant based on current output descriptions:

Product demo loops from still photography

Social content where camera movement and atmosphere matter more than lip-sync accuracy

Storyboarding and client preview work where polish isn't the priority but motion coherence is

What it probably isn't, yet: a daily production workhorse. Until the model is accessible via a stable API with documented rate limits, pricing, and output consistency across batches, building a workflow around it is premature. Test it. Don't depend on it.

Limitations and Risks for Creators

Let me be direct about the things that would give me pause before integrating this into anything commercial.

Unknown provenance = unknown licensing. The open source claims are unverified. If you don't know who built it or under what license, you don't know whether commercial use of the output is actually permitted. Until a formal license is published and attributable to a named entity, proceed with caution for monetized content.

The browser demo is not a production pipeline. Browser-based demos can go offline, introduce rate limits, or change pricing without notice. That's not specific to HappyHorse — it applies to any tool in early access.

Audio performance isn't #1. If your content format requires accurate lip-sync or native audio generation, Seedance 2.0 currently holds a narrow edge in the with-audio categories. Worth testing both for your specific use case rather than defaulting to the overall #1.

No paper, no reproducibility. Without a technical release, you can't evaluate architecture decisions, training data, or safety filtering. That matters less for social clips; it matters more if you're building anything with faces or sensitive subject matter.

FAQ

Is HappyHorse-1.0 free?

A browser demo is currently accessible at happy-horse.net, but free access may be credit-limited. Pricing structures weren't publicly documented in a stable location as of April 2026 — check the site directly before making assumptions. Free daily credits appear to exist, but may require an account.

Is HappyHorse-1.0 really open source?

Claimed, but not yet verified. Multiple sites reference a 15B-parameter open-source release with commercial rights, base model weights, and inference code. The GitHub repository listed in the documentation returned a 404 at the time of writing. The HuggingFace repository was similarly unavailable. Treat open-source claims as pending until a confirmed public release appears.

Can I use HappyHorse output for commercial content?

Unknown. Commercial rights depend on the license, which requires a verified developer release to confirm. Until a named team publishes formal licensing terms, using outputs for monetized content carries risk. The open-source claims, if confirmed, reportedly include commercial rights — but "reportedly" is doing a lot of work in that sentence.

Does HappyHorse support vertical video?

Yes, based on available demos. Browser-based access supports multiple aspect ratios including 9:16 for vertical formats. That said, output quality across aspect ratios hasn't been systematically tested in the community yet — the leaderboard rankings are primarily based on standard formats.

Who is behind HappyHorse?

Unknown. Community speculation has pointed toward an Asian AI lab, with some observers suggesting a possible connection to the Wan model lineage. No team has publicly claimed the model. Artificial Analysis described it as "pseudonymous" when adding it to the arena. This is the most important unanswered question for anyone considering commercial use.

Conclusion

HappyHorse-1.0 is real in one specific sense: the leaderboard scores are real, the blind voting methodology is real, and the current #1 positions in text-to-video and image-to-video (no audio) are real. That's what's verified.

Everything else — the 15B parameters, the open-source release, the developer identity, the GitHub repo — remains unconfirmed as of this writing.

If you work in short-form video and rely on image-to-video generation, this is worth testing via the browser demo. If you're evaluating it for a production workflow or commercial project, wait until there's a verifiable technical release with clear licensing.

I'll be watching whether the GitHub/HuggingFace repositories go live and whether a named team claims the model. That's the moment this shifts from "interesting leaderboard result" to "tool worth building around."
